2. School of Information and Communication, Guilin University of Electronic Technology, Guilin Guangxi 541004, China

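Image super-resolution (SR) reconstruction aims to recover a high-resolution (HR) image from one or more low-resolution (LR) images by inferring the lost high-frequency information[1]. Single image SR (SISR) algorithms fall into three broad classes: interpolation-based[2], reconstruction-based[3], and learning-based[4-10]. Because learning-based algorithms give better reconstruction results, most research builds on them. Current learning-based algorithms usually learn the mapping between LR and HR image patches. The neighbor embedding algorithm of Chang et al.[6] (Neighbor Embedding with Locally Linear Embedding, NE+LLE) interpolates the patch subspace. The sparse coding algorithm of Yang et al.[4-5] uses sparse representation to learn coupled dictionaries. Random forests[8] and convolutional neural networks[9-10] have also been applied to this field, with large gains in accuracy. In particular, Dong et al.[9] proposed super-resolution reconstruction based on a convolutional neural network (Learning a Deep Convolutional Network for Image SR), successfully bringing deep learning into the SR field; the resulting system is called SRCNN. Its main feature is that it learns the mapping between LR and HR image patches directly in an end-to-end manner, with very little pre- and post-processing. By contrast, the learning algorithms of Yang et al.[4-7] need a pre-processing stage, namely patch extraction and aggregation, which must be handled separately. Notably, SRCNN generally outperforms the algorithms of Yang et al.[4-5, 7].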

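SRCNN nevertheless has limitations. First, the features the network learns are few and lack diversity; second, its learning rate is low, so training takes a long time.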

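The SRCNN model demonstrated that learning an end-to-end LR-HR mapping directly is feasible, which suggests that adding more convolutional layers to extract more features might improve its reconstruction quality. However, deeper networks are harder to train and do not converge easily. This paper therefore introduces a parallel network structure whose two branches are trained side by side without interfering with each other. The two branches use different structures to capture more, and more varied, effective features, which addresses the problem that SRCNN learns few features with little diversity. Because the parallel design widens the network and increases both the number of parameters and the number of features, it improves the reconstruction quality of the model.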

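To counter the added model complexity, this paper adds a Local Response Normalization (LRN) layer[10] after the corresponding convolutional layers. LRN imitates lateral inhibition: it forces features within a feature map and in adjacent feature maps to compete locally, so that all input feature maps have similar variance. By reducing the noise that ill-posedness introduces during parameter updates, it simplifies the model parameters, and the model can then be trained with a learning rate 10 times higher than that of SRCNN. A higher learning rate makes training less likely to get stuck in a local minimum and speeds up convergence. During training, this paper uses the same learning rate for all layers, whereas SRCNN uses different learning rates in different layers so that the model converges stably.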

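1 Super-resolution reconstruction model based on a parallel network

This paper introduces a split layer[13] to build the parallel network model. The model widens the network, increases the number of parameters, and effectively prevents overfitting. The two branches are designed with different structures so that they capture different effective features, and more effective features help improve the reconstruction quality.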

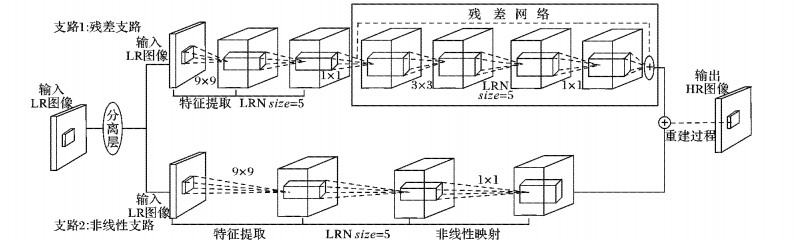

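The model consists of a residual branch and a nonlinear branch arranged in parallel. Both branches take the same LR image as input, and the model outputs the HR image. The overall framework is shown in Figure 1.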

Figure 1 Parallel network structure

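Residual branch: one output of the split layer, an LR image, serves as the input to this branch. A convolutional layer with a 9×9 kernel first extracts features; this feature extraction layer is effectively a linear operation. The ReLU activation function[15] then applies a nonlinearity to all feature maps output by the feature extraction layer, and LRN is applied to all feature maps output by the activation. Finally, the LRN response is fed to the residual network.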

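Nonlinear branch: this branch also extracts features with a 9×9 convolution kernel and then applies ReLU to the resulting maps. An LRN layer processes all feature maps after the nonlinearity, and the LRN response is fed to the nonlinear layer.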

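Adding convolutional layers can improve reconstruction performance, but the extra filter parameters lengthen training. The second branch therefore uses a nonlinear network, which increases the nonlinearity of the network without increasing its complexity and improves reconstruction quality accordingly.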

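Notably, the residual branch and the nonlinear branch are trained without interfering with each other, which avoids the problem of parameter values from one branch being unsuitable for the other. Because the two branches differ in structure, they can capture different effective features, so more useful information is available during reconstruction and the reconstructed HR image is closer to the original. Compared with a single branch, two branches widen the network, increasing the number of parameters and features while also effectively preventing overfitting.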

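Reconstruction layer: the output features of the residual network and of the nonlinear layer are added together to fuse them, and a convolutional layer with a 5×5 kernel reconstructs the HR image from all the fused feature maps.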

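2 Branch network design and training

To improve the convergence speed and reconstruction quality of each branch, this paper studies the network structure, the learning rate, and related factors. In conventional deep learning training, simply setting a high learning rate leads to exploding or vanishing gradients[14]. This paper therefore adds LRN layers to simplify the model and address vanishing and exploding gradients during training, so that the whole network can be trained with a higher learning rate. A higher learning rate produces relatively large parameter steps, so training is less likely to get stuck in a local minimum and needs fewer parameter updates, which speeds up convergence.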

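2.1 LRN (local response normalization) in the convolutional neural network

This layer processes the input feature maps one by one and simplifies the network model. It is defined as: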

$$ \boldsymbol{b}_{x, y}^{i}=\boldsymbol{a}_{x, y}^{i} \Big/ \Big(k+\alpha \sum_{j=\max (0,\, i-n/2)}^{\min (N-1,\, i+n/2)} (\boldsymbol{a}_{x, y}^{j})^{2}\Big)^{\beta} \tag{1} $$

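where $\boldsymbol{a}_{x, y}^{i}$ is the response of the $i$-th kernel at position $(x, y)$ of the feature map and serves as the input; $N$ is the total number of filters in the layer; $\boldsymbol{b}_{x, y}^{i}$ is the output response; and $k$, $n$, $\alpha$, $\beta$ are fixed constants, with $k=1$, $n=5$, $\alpha=10^{-4}$, $\beta=0.75$.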

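Equation (1) processes each pixel of a feature map together with the corresponding pixels of its 5 adjacent feature maps, normalizing by their summed squared responses, and introduces no additional filter parameters. This process imitates lateral inhibition and normalizes local input regions. By applying a further constraint to the parameters after each gradient descent update, LRN reduces the variance between adjacent feature maps and thus the noise introduced by each parameter update, which simplifies the model parameters. The simplified model is optimized toward satisfying the LRN constraint; the reduced noise addresses exploding gradients during parameter updates, so the whole network can be trained with a higher learning rate.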
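The channel-wise normalization of Equation (1) can be sketched in a few lines of plain Python. This is only an illustrative sketch, not the Caffe layer used in the paper: the function name `lrn`, the nested-list data layout, and the use of integer division for $n/2$ are assumptions made here.

```python
def lrn(feature_maps, k=1.0, n=5, alpha=1e-4, beta=0.75):
    """Local response normalization across feature maps, following Eq. (1).

    feature_maps: list of N maps, each a 2-D list indexed as [y][x].
    Assumption: n/2 in Eq. (1) is taken as integer division (n // 2).
    """
    num_maps = len(feature_maps)
    height = len(feature_maps[0])
    width = len(feature_maps[0][0])
    half = n // 2
    out = [[[0.0] * width for _ in range(height)] for _ in range(num_maps)]
    for i in range(num_maps):
        lo = max(0, i - half)             # lower summation bound in Eq. (1)
        hi = min(num_maps - 1, i + half)  # upper summation bound in Eq. (1)
        for y in range(height):
            for x in range(width):
                sq_sum = sum(feature_maps[j][y][x] ** 2 for j in range(lo, hi + 1))
                out[i][y][x] = feature_maps[i][y][x] / (k + alpha * sq_sum) ** beta
    return out


# Three 1x1 feature maps with equal responses: each output is slightly damped.
normalized = lrn([[[1.0]], [[1.0]], [[1.0]]])
```

No learnable parameters appear in the function, which matches the statement that the layer introduces no additional filter parameters.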

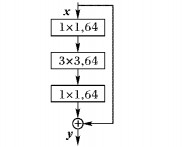

2.2 Residual network in the residual branch

This paper uses a simple residual network, whose framework is shown in Figure 2.

Figure 2 Simple residual network

The network is defined as:

$$ \boldsymbol{y}=F(\boldsymbol{x}, \{\boldsymbol{W}_{i}\})+\boldsymbol{x} \tag{2} $$

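where $\boldsymbol{x}$ and $\boldsymbol{y}$ are the input and output of the residual network, and $F(\boldsymbol{x}, \{\boldsymbol{W}_{i}\})$ is the residual mapping the network learns. As Figure 2 shows, the network has three layers, so $F=\boldsymbol{W}_{3}\sigma(\boldsymbol{W}_{2}\sigma(\boldsymbol{W}_{1}\boldsymbol{x}))$, where $\sigma$ denotes ReLU and the biases are omitted to simplify notation. The operation $F+\boldsymbol{x}$ is realized by a shortcut connection and element-wise addition.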
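To make the structure of Equation (2) concrete, the following minimal sketch evaluates $\boldsymbol{y}=\boldsymbol{W}_{3}\sigma(\boldsymbol{W}_{2}\sigma(\boldsymbol{W}_{1}\boldsymbol{x}))+\boldsymbol{x}$ on a small vector. As a simplifying assumption, the convolutional layers are replaced by small dense matrices, and the helper names `relu`, `matvec`, and `residual_block` are illustrative only.

```python
def relu(v):
    """Element-wise ReLU, the sigma of Eq. (2)."""
    return [max(0.0, t) for t in v]


def matvec(w, v):
    """Multiply matrix w (list of rows) by vector v."""
    return [sum(w_ij * v_j for w_ij, v_j in zip(row, v)) for row in w]


def residual_block(x, w1, w2, w3):
    """y = W3 relu(W2 relu(W1 x)) + x, with biases omitted as in Eq. (2)."""
    f = matvec(w3, relu(matvec(w2, relu(matvec(w1, x)))))
    # Shortcut connection: element-wise addition of the input.
    return [f_i + x_i for f_i, x_i in zip(f, x)]


identity = [[1.0, 0.0], [0.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]

# With all-zero weights the residual F vanishes and the block passes x through.
passthrough = residual_block([3.0, -2.0], zeros, zeros, zeros)
```

The pass-through case illustrates why the shortcut adds no parameters: the addition itself has no weights, and the block only has to learn the residual $F$.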

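A residual network has the advantage that a residual mapping is easier to optimize than the original mapping[16-17]. It relies on shortcut connections[18] that skip one or more layers, which makes optimizing the network parameters faster. As Figure 2 shows, the shortcut adds neither extra parameters nor computational complexity. The whole network is still trained by stochastic gradient descent.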

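Considering the structural complexity of the branch and the training time, this paper uses a three-layer residual network whose layers are 1×1, 3×3, and 1×1 convolutions. The 1×1 convolutions increase the nonlinearity of the network without increasing model complexity. Notably, an LRN layer is added after the middle layer to process the feature maps output by the preceding layer, so that feature maps in a local region compete with each other and are locally normalized, which simplifies the model parameters.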

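In the residual network, zero padding keeps the image dimensions consistent. This is also why a simple residual network is chosen: the larger the filters in the residual network, the more zero padding is needed, which adds noise to the image and in turn lowers reconstruction quality.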
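The link between filter size and the amount of zero padding can be checked with the standard convolution size formula. The sketch below assumes stride 1 and odd kernel sizes; the helper names `conv_output_size` and `same_pad` are hypothetical, and the 33-pixel width is only an example value.

```python
def conv_output_size(in_size, kernel, pad=0, stride=1):
    """Spatial output size of a convolution along one dimension."""
    return (in_size + 2 * pad - kernel) // stride + 1


def same_pad(kernel):
    """Zeros needed on each side to keep the size unchanged (stride 1, odd kernel)."""
    if kernel % 2 == 0:
        raise ValueError("this sketch only handles odd kernel sizes")
    return (kernel - 1) // 2


# Padding required by the 1x1, 3x3, 1x1 residual layers on a 33-pixel-wide map.
padding_per_layer = {kernel: same_pad(kernel) for kernel in (1, 3)}
sizes = [conv_output_size(33, kernel, same_pad(kernel)) for kernel in (1, 3, 1)]
```

Under these assumptions only the 3×3 layer needs padding, one pixel per side, whereas a 9×9 filter would need four pixels per side, which is the trade-off the paragraph describes.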

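2.3 Network training

As before, this paper optimizes the model parameters by minimizing the Euclidean distance to obtain the final model. Given a training set $\{x^{(i)}, y^{(i)}\}_{i=1}^{N}$, where $x^{(i)}$ denotes a set of real high-resolution images and $y^{(i)}$ a set of low-resolution images, the goal of this paper is to learn the model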
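A common way to write a Euclidean-distance objective of this kind is sketched below. The mapping symbol $f$, the parameter set $\Theta$, and the $1/(2N)$ scaling are assumptions introduced here for illustration (the scaling follows the usual convention of Euclidean loss layers); the paper's own formula may differ.

$$ L(\Theta)=\frac{1}{2N}\sum_{i=1}^{N}\left\| f(y^{(i)}; \Theta)-x^{(i)} \right\|_{2}^{2} $$

Under this reading, $f(y^{(i)}; \Theta)$ is the HR estimate produced from the LR input $y^{(i)}$, and training searches for the $\Theta$ that minimizes $L$.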

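The experiments use 91 images as the training set and a test set composed of set4 and set5. An upscaling factor of 3 is used both for training and for evaluating image quality.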

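The proposed algorithm and the comparison algorithms are all run on the same platform (Intel CPU at 3.20 GHz with 8 GB of memory) using Matlab R2014a and Caffe. Caffe is used to train the networks of the proposed algorithm and of SRCNN; the other algorithms need no such step. Note that the deep-learning-based experiments use the same dataset, so that dataset size does not influence reconstruction accuracy. The network input is a 33×33 sub-image cropped from the high-resolution image set $x^{(i)}$. In the network, the feature extraction layers use 9×9 filters, the residual network uses 1×1, 3×3, and 1×1 filters, the nonlinear layer uses 1×1 filters, and the reconstruction layer uses 5×5 filters. The reconstruction layer has 1 filter; all other layers have 64. Bicubic interpolation serves as the baseline, and the sparse-coding-based super-resolution (Sparse coding based Super Resolution, ScSR) algorithm[5], the Anchored Neighborhood Regression (ANR) algorithm[7], and SRCNN[9] are used for comparison. The experiments use common test images such as flowers, butterfly, and face, and the LR images are upscaled by a factor of s=3.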
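The filter sizes and counts listed above give a rough idea of the model size. The following sketch tallies the convolution parameters under several assumptions that the text does not state: a single-channel input (`IN_CH = 1`), one bias per filter, one feature extraction layer per branch, and a reconstruction layer that sees 64 fused feature maps. The layer names are labels chosen for this example.

```python
def conv_params(kernel, in_ch, out_ch, bias=True):
    """Number of weights (and optional biases) in a square convolution layer."""
    return kernel * kernel * in_ch * out_ch + (out_ch if bias else 0)


IN_CH = 1     # assumption: single-channel (e.g. luminance) input
FILTERS = 64  # from the text: 64 filters in every layer except reconstruction

# (kernel size, input channels, output channels) for each layer.
layers = {
    "residual/feature_9x9": (9, IN_CH, FILTERS),
    "residual/res_1x1_a": (1, FILTERS, FILTERS),
    "residual/res_3x3": (3, FILTERS, FILTERS),
    "residual/res_1x1_b": (1, FILTERS, FILTERS),
    "nonlinear/feature_9x9": (9, IN_CH, FILTERS),
    "nonlinear/nonlinear_1x1": (1, FILTERS, FILTERS),
    "reconstruction_5x5": (5, FILTERS, 1),
}

counts = {name: conv_params(*spec) for name, spec in layers.items()}
total = sum(counts.values())

for name, count in counts.items():
    print(f"{name:26s} {count:6d}")
print(f"{'total':26s} {total:6d}")
```

Under these assumptions the 3×3 residual layer dominates the count, while the LRN layers and the shortcut connection contribute no parameters at all.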

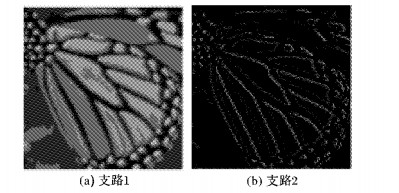

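This paper builds a parallel network. Put simply, the parallel design widens the network and increases the number of parameters and features, which effectively improves the visual quality of the reconstruction. In addition, the network consists of two different branches that capture different effective image features, and more effective features also help improve reconstruction quality. Figure 3 shows arbitrarily chosen feature maps from the two branches.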

Figure 3 Feature maps of two branches

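As Figure 3 shows, both branches obtain effective feature information: one captures smooth information and the other captures contour information. This supports the claim that different network structures capture different effective features.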

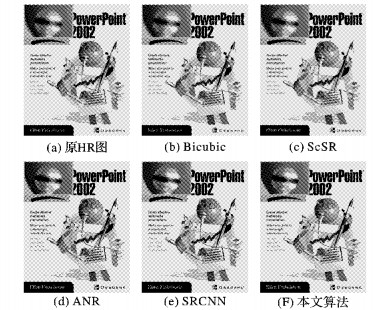

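The results are shown in Figures 4-5, which compare the reconstructions of the bird and ppt3 images by different SR methods, both for the full image and for cropped details: the feather texture around the bird's eye and the microphone in ppt3. Visually, Bicubic, which rests on a smoothness assumption, gives the worst reconstruction: details are indistinct, the image is blurred, and the surface looks overly smooth. ScSR reconstructs some details well, but the alternating black-and-white feather edges around the bird's eye look unnatural and show ringing. ANR does relatively well around the bird's eye and on the ppt3 microphone, with fine detail but some artifacts. SRCNN improves considerably on these methods both visually and in the evaluation metrics, yet the ringing in the feathers around the bird's eye still needs improvement. The proposed method clearly improves the sharpness and clarity of the bird's feather edges and recovers rich high-frequency information, giving a better visual result. Likewise, the microphone detail of ppt3 in Figure 5 shows that the local details recovered by the proposed algorithm are clear and fine, and the overall result is closer to the original image.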

Figure 4 Comparison of original bird HR and reconstruction results of each method

Figure 5 Comparison of original ppt3 HR and reconstruction results of each method

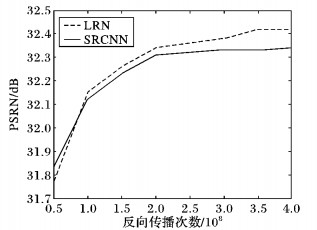

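This paper first runs a comparison experiment in which an LRN layer is added to a single branch. The results are shown in Figure 6: adding an LRN layer to a single branch speeds up the convergence of the model.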

Figure 6 PSNR comparison of single branch adding LRN and SRCNN

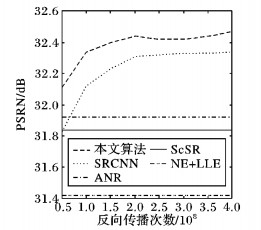

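In addition, both branches have simple structures: one consists of a feature extraction layer and the simplest residual network, whose residual mapping is easier to optimize than the original mapping; the other consists only of a feature extraction layer and a nonlinear layer. Notably, the LRN layers are added mainly to simplify the network parameters and reduce the interference of input noise during parameter updates, which allows this paper to use a learning rate of 0.001. The higher learning rate makes the whole network converge more easily and also helps improve reconstruction accuracy. This paper also tests convergence speed; Figure 7 shows the results on the set5 dataset. For the conventional algorithms in Figure 7, convergence speed is not a factor: they train a dictionary and then perform matrix operations, so the number of backpropagation iterations does not affect them, their peak signal-to-noise ratio (PSNR) stays constant as the iterations increase, and their PSNR curves are horizontal lines. SRCNN achieves higher reconstruction accuracy than the other comparison algorithms, and the proposed algorithm is the best overall, with an average PSNR 0.2 dB higher than SRCNN, which indicates that it is feasible and effective. At $2\times10^{8}$ backpropagations, the proposed algorithm reaches an average of 32.42 dB on set5, already exceeding the 32.39 dB that SRCNN reaches at $8\times10^{8}$ backpropagations, which shows that a high learning rate speeds up convergence. The proposed algorithm also outperforms SRCNN both visually and in the evaluation metrics, which suggests that a high learning rate benefits reconstruction quality as well; the results are given in Tables 1-2. Note that the proposed algorithm finally uses $4.0\times10^{8}$ backpropagations, whereas SRCNN uses $8.0\times10^{8}$.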
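For reference, the PSNR figures quoted above follow the usual definition $10\log_{10}(\text{peak}^2/\text{MSE})$. The sketch below computes it for small images stored as nested lists; the function name `psnr`, the 8-bit peak value of 255, and the toy pixel values are assumptions for illustration, not the evaluation code used in the paper.

```python
import math


def psnr(reference, reconstructed, peak=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized 2-D images."""
    flat_ref = [p for row in reference for p in row]
    flat_rec = [p for row in reconstructed for p in row]
    if len(flat_ref) != len(flat_rec):
        raise ValueError("images must have the same number of pixels")
    mse = sum((a - b) ** 2 for a, b in zip(flat_ref, flat_rec)) / len(flat_ref)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / mse)


# Toy example: every pixel is off by one gray level, so the MSE is 1.
original = [[100, 100], [100, 100]]
estimate = [[101, 99], [99, 101]]
score = psnr(original, estimate)
```

Because the scale is logarithmic, a gap of a few hundredths of a dB, such as 32.42 dB versus 32.39 dB, corresponds to a small relative change in mean squared error.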

Figure 7 Convergence speed and results on set5 for the proposed algorithm and the comparison algorithms

Table 1 Results of PSNR (dB) on the test images

Table 2 Results of SSIM on the test images

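This paper proposed a super-resolution reconstruction method based on a parallel convolutional network. The network shows that widening the network captures more, and more varied, effective features, and that more effective feature information improves reconstruction accuracy. It also verifies that normalizing local inputs with LRN reduces the interference of input noise and thereby simplifies the network parameters. Simplifying the model parameters both strengthens the network's ability to fit features and allows the network to be trained with a higher learning rate. A higher learning rate reduces the number of parameter updates and thus speeds up convergence. The proposed method improves both the subjective reconstruction quality and the objective evaluation metrics. Future work includes studying how to train deeper network structures to convergence with higher learning rates and how to improve reconstruction accuracy by increasing network depth.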

[1] GLASNER D, BAGON S, IRANI M. Super-resolution from a single image[C]//Proceedings of the 2009 IEEE 12th International Conference on Computer Vision. Piscataway, NJ: IEEE, 2009: 349-356.

[2] ZHANG D, WU X. An edge-guided image interpolation algorithm via directional filtering and data fusion[J]. IEEE Transactions on Image Processing, 2006, 15(8): 2226-2238. doi: 10.1109/TIP.2006.877407

[3] RASTI P, DEMIREL H, ANBARJAFARI G. Image resolution enhancement by using interpolation followed by iterative back projection[C]//Proceedings of the 2013 21st Signal Processing and Communications Applications Conference (SIU). Piscataway, NJ: IEEE, 2013: 1-4.

[4] YANG J-C, WRIGHT J, HUANG T S, et al. Image super-resolution via sparse representation[J]. IEEE Transactions on Image Processing, 2010, 19(11): 2861-2873. doi: 10.1109/TIP.2010.2050625

[5] YANG J, WRIGHT J, HUANG T, et al. Image super-resolution as sparse representation of raw image patches[C]//Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ: IEEE, 2008: 1-8.

[6] CHANG H, YEUNG D Y, XIONG Y. Super-resolution through neighbor embedding[C]//Proceedings of the 2004 Computer Vision and Pattern Recognition. Piscataway, NJ: IEEE, 2004, 1: Ⅰ-Ⅰ.

[7] TIMOFTE R, SMET V, GOOL L. Anchored neighborhood regression for fast example-based super-resolution[C]//Proceedings of the 2013 IEEE International Conference on Computer Vision. Piscataway, NJ: IEEE, 2013: 1920-1927.

[8] SCHULTER S, LEISTNER C, BISCHOF H. Fast and accurate image upscaling with super-resolution forests[C]//Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ: IEEE, 2015: 3791-3799.

[9] DONG C, LOY C C, HE K, et al. Learning a deep convolutional network for image super-resolution[C]//Proceedings of the 13th European Conference on Computer Vision, LNCS 8692. Berlin: Springer, 2014: 184-199.

[10] DONG C, LOY C C, HE K, et al. Image super-resolution using deep convolutional networks[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2016, 38(2): 295-307. doi: 10.1109/TPAMI.2015.2439281

[11] KRIZHEVSKY A, SUTSKEVER I, HINTON G E. ImageNet classification with deep convolutional neural networks[EB/OL]. [2016-03-10]. https://papers.nips.cc/paper/4824-imagenet-classification-with-deep-convolutional-neural-networks.pdf.

[12] SHANKAR S, ROBERTSON D, IOANNOU Y, et al. Refining architectures of deep convolutional neural networks[EB/OL]. [2016-03-01]. https://arxiv.org/pdf/1604.06832v1.pdf.

[13] NAIR V, HINTON G E. Rectified linear units improve restricted Boltzmann machines[EB/OL]. [2016-03-01]. http://machinelearning.wustl.edu/mlpapers/paper_files/icml2010_NairH10.pdf.

[14] BENGIO Y, SIMARD P, FRASCONI P. Learning long-term dependencies with gradient descent is difficult[J]. IEEE Transactions on Neural Networks, 1994, 5(2): 157-166. doi: 10.1109/72.279181

[15] HE K, ZHANG X, REN S, et al. Deep residual learning for image recognition[EB/OL]. [2016-03-01]. https://arxiv.org/pdf/1512.03385v1.pdf.

[16] SZEGEDY C, IOFFE S, VANHOUCKE V. Inception-v4, inception-ResNet and the impact of residual connections on learning[EB/OL]. [2016-03-01]. https://arxiv.org/pdf/1602.07261.pdf.

[17] BISHOP C M. Neural Networks for Pattern Recognition[M]. Oxford: Oxford University Press, 1995.

[18] LECUN Y, BOTTOU L, BENGIO Y, et al. Gradient-based learning applied to document recognition[J]. Proceedings of the IEEE, 1998, 86(11): 2278-2324. doi: 10.1109/5.726791