2. 智能信息处理与实时工业系统湖北省重点实验室 (武汉科技大学), 武汉 430065

2. Hubei Province Key Laboratory of Intelligent Information Processing and Real-time Industrial System (Wuhan University of Science and Technology), Wuhan Hubei 430065, China

当前, 现实世界中的很多应用都涉及多模态文件, 在多模态文件里信息由不同模态的数据组成, 例如一篇新闻文章由图片和相应的文字描述组成。在近几年来, 与多媒体相关的研究发展迅速, 其中跨媒体检索成为一个研究的热点。在跨媒体检索中, 如何挖掘不同类型数据之间的内在联系, 进而计算跨媒体数据之间的相似度, 是跨媒体检索需要解决的关键问题[1]。

为了解决这个问题, 典型相关性分析 (Canonical Correlation Analysis, CCA)、核典型相关性分析 (Kernel Canonical Correlation Analysis, KCCA)[2]、稀疏典型相关性分析 (Spase Canonical Correlation Analysis, SpaseCCA)、结构稀疏典型相关分析 (Structured Spase Canonical Correlation Analysis, Structured Spase CCA) 等围绕CCA的算法被提出用来实现不同模态数据之间的相互检索[3]。它们的主要思想是将不同模态的数据通过某种映射, 使得映射后的向量之间的皮尔逊相关系数最大。但是它们都没有有效利用不同模态数据的类别信息。为了利用数据的类别信息, 可以将图像特征或文本特征表达成视觉词袋 (Bag of Visual Words, BoVW) 或者单词词袋 (Bag of Words, BoW), 一些通过隐狄利克雷分布 (Latent Dirichlet Allocation, LDA)[4]来实现不同模态数据的关联建模的方法也相继被提出。为了进一步探究文本图像所蕴含的相关联语义[5-6], 多模态文档随机场等概率图模型方法也被用来对不同模态数据之间的关联关系建模[7]。最近由于深度学习[8]的兴起, 很多与深度学习相关的模型也被用于跨媒体检索[9-10], 如玻尔兹曼机[11]和卷积神经网络等深度学习模型。同时为了实现海量数据的高效检索, 一些基于哈希 (Hash)[12]的算法也被用于跨媒体检索的研究[13-15], 如局部敏感哈希 (Locality Sensitive Hashing, LSH) 算法、多视图哈希索引等。

本文为了探究不同模态数据之间的语义相关关系以及如何有效地利用数据的标签信息, 提出了一种新的基于潜语义主题加强的跨媒体检索算法。算法的主要流程如下:

1) 利用多分类逻辑回归对图像和文本进行分类, 得到分类模型, 然后利用分类模型计算图像和文本基于多分类的后验概率, 使用该后验概率向量表示图像和文本的潜语义主题。

2) 由于文本的潜语义主题比图像潜语义主题更加明晰, 为了使文本和图像的潜语义主题的相关性最大, 用文本潜语义主题正则化图像潜语义主题, 使图像和文本的潜语义主题趋于一致。

3) 利用皮尔逊相关系数来度量文本和图像向量之间的相似性, 实现图像和文本之间的相互检索。

实验结果表明, 本文方法能够有效地提高跨媒体检索的准确率。

1 提取图像和文本的潜语义主题利用多分类逻辑回归模型提取图像和文本的潜语义主题, 该模型也称softmax回归。Softmax回归是有监督学习算法, 通过训练数据建立模型, 然后利用模型估算测试数据基于每一个类别的后验概率。将m个已标记训练数据样本表示为{(x (1), y(1)), (x(2), y(2)), …, (x(m), y(m))}, 其中输入样本特征x(i)∈ Rn+1, n+1为输入文本或图像特征的维度;y(i)∈{1, 2, …, k}为对应类别的标记值, 不同的标签值对应不同的类别信息, k为总类别数。对于给定的测试输入X, 用假设函数针对每一个类别j估算出后验概率值p(y=j| x), 也就是计算x基于每一种分类结果出现的概率。因此, 假设函数会输出一个k维向量 (向量的各个元素之和为1) 来表示测试数据基于k个类别的估算概率值, 可用如下公式表示:

| $ \begin{array}{l} {\mathit{\boldsymbol{h}}_\mathit{\boldsymbol{\theta }}}\left( {{\mathit{\boldsymbol{x}}^{\left( i \right)}}} \right) = \left[\begin{array}{l} p\left( {{\mathit{\boldsymbol{y}}^{\left( i \right)}} = 1\left| {{\mathit{\boldsymbol{x}}^{\left( i \right)}};\mathit{\boldsymbol{\theta }}} \right.} \right)\\ p\left( {{\mathit{\boldsymbol{y}}^{\left( i \right)}} = 2\left| {{\mathit{\boldsymbol{x}}^{\left( i \right)}};e} \right.} \right)\\ \;\;\;\;\;\;\;\;\;\; \vdots \\ p\left( {{\mathit{\boldsymbol{y}}^{\left( i \right)}} = k\left| {{\mathit{\boldsymbol{x}}^{\left( i \right)}};\mathit{\boldsymbol{\theta }}} \right.} \right) \end{array} \right] = \\ \;\;\;\;\;\;\;\;\;\;\;\;\;\frac{1}{{\sum\limits_{j = 1}^k {\exp \left( {{\mathit{\boldsymbol{\theta }} ^\rm{T}}_j{\boldsymbol{x}^{\left( i \right)}}} \right)} }}\left[\begin{array}{l} {\rm{exp}}\left( {\mathit{\boldsymbol{\theta }}_1^{\rm{T}}{\mathit{\boldsymbol{x}}^{\left( i \right)}}} \right)\\ {\rm{exp}}\left( {\mathit{\boldsymbol{\theta }}_2^{\rm{T}}{\mathit{\boldsymbol{x}}^{\left( i \right)}}} \right)\\ \;\;\;\;\;\;\; \vdots \\ {\rm{exp}}\left( {\mathit{\boldsymbol{\theta }}_k^{\rm{T}}{\mathit{\boldsymbol{x}}^{\left( i \right)}}} \right) \end{array} \right] \end{array} $ | (1) |

其中: θ1, θ2, …, θk∈ Rn+1是模型的参数;

求解θ, 需要设置一个代价函数, 如果能够使用优化算法求解代价函数的最小值, 即可得到θ。通过在代价函数中添加一个权重衰减项, Softmax模型的代价函数可以表示为:

| $ \begin{array}{l} J\left( \mathit{\boldsymbol{\theta }} \right) =- \frac{1}{m}\left[{\sum\limits_{i = 1}^m {\sum\limits_{j = 1}^k {1\left\{ {{\mathit{\boldsymbol{y}}^{\left( i \right)}} = j} \right\}{\rm{log}}\frac{{{\rm{exp}}\left( {\mathit{\boldsymbol{\theta }}_j^{\rm{T}}{\mathit{\boldsymbol{x}}^{\left( i \right)}}} \right)}}{{\sum\limits_{l = 1}^k {{\rm{exp}}\left( {\mathit{\boldsymbol{\theta }}_l^{\rm{T}}{\mathit{\boldsymbol{x}}^{\left( i \right)}}} \right)} }}} } } \right]{\rm{ + }}\\ \;\;\;\;\;\;\;\;\;\;\frac{\lambda }{2}\sum\limits_{i = 1}^k {\sum\limits_{j = 0}^n {\mathit{\boldsymbol{\theta }}_{ij}^2} } \end{array} $ | (2) |

其中1{·}表示性函数, 其取值规则为:如“{}”里的表达式为真则取值1, 为假则取值0。加入了权重衰减项

求解得到θ后, 就可以得到文本和图像基于各个类别的后验概率, 用该后验概率向量表示图像和文本的潜语义主题。

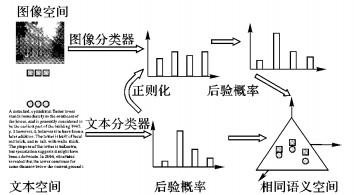

2 基于正则化的潜语义主题加强本文提出的基于潜语义主题加强的跨媒体检索算法, 就是在提取图像和文本潜语义主题的基础上, 为了使图像和文本的潜语义主题之间的相关性最大, 用正则化的方法对图像潜语义主题进行加强。算法流程如图 1所示。

|

图 1 基于潜语义主题加强的跨媒体检索算法 Figure 1 Latent semantic topic reinforce cross-media retrieval |

由于图像是在像素级别上的语义抽象, 通过多分类逻辑回归模型得到的潜语义主题并不明晰, 而文本得到潜语义主主题较为明确, 因此可用文本的潜语义主题来正则化图像的潜语义主题, 使图像的潜语义主题得到加强, 同时也使图像和文本的潜语义主题之间的关联性最大化。图像的潜语义主题用X=[x1, x2, …, xn]T∈ R n×k表示, 文本的潜语义主题用T=[t1, t2, …, tn]T∈ Rn×k表示。正则化图像就是使图像的潜语义主题X与文本的潜语义主题T所表达的语义主题尽可能地趋于相近, 即:

| $ \boldsymbol{H}:{\boldsymbol{x}_i} \to {\boldsymbol{t}_i} $ | (3) |

H为一个线性转换矩阵:

| $ \boldsymbol{T} = \boldsymbol{XH} $ | (4) |

把式 (4) 展开来为:

| $ \left( \begin{array}{l} {\boldsymbol{t}_1}^T\\ {\boldsymbol{t}_2}^T\\ \vdots \\ {\boldsymbol{t}_n}^T \end{array} \right) = \left( \begin{array}{l} {\boldsymbol{x}_1}^T\\ {\boldsymbol{x}_2}^T\\ \vdots \\ {\boldsymbol{x}_n}^T \end{array} \right)\left[{{\boldsymbol{h}_1}, {\boldsymbol{h}_2}, \ldots, {\boldsymbol{h}_k}} \right] $ | (5) |

其中: hi为H的列向量。用最小二乘算法来求得最优的H, 其约束条件为:

| $ {\boldsymbol{x}_i}^T{\boldsymbol{h}_k} \ge 0, \forall i = 1, 2, \ldots, N{\rm{;}}\forall k = 1, 2, \ldots, K $ | (6) |

| $ \sum {{\boldsymbol{x}_i}^{\rm{T}}\boldsymbol{H} = 1, \forall i = 1, 2, \ldots, K} $ | (7) |

式 (6)~(7) 是根据文本特征和图像特征, 经过多分类逻辑回归得到的基于各个类别的后验概率向量的元素之和为1, 且每一个的概率都大于0得来的。式 (4) 可以转换为最小二乘法的规范形式:

| $ \boldsymbol{b} = \boldsymbol{Mx} $ | (8) |

其中: b ∈ RNK, x ∈ RK2为列向量, M ∈ RNK×K2为稀疏矩阵。将式 (8) 展开为:

| $ \left[{\begin{array}{*{20}{c}} {{\mathit{\boldsymbol{t}}_1}}\\ {{\mathit{\boldsymbol{t}}_2}}\\ \vdots \\ {{\mathit{\boldsymbol{t}}_N}} \end{array}} \right] = \left[{\begin{array}{*{20}{c}} {\mathit{\boldsymbol{x}}_1^{\rm{T}}}&0& \cdots &0\\ 0&{\mathit{\boldsymbol{x}}_1^{\rm{T}}}& \cdots &0\\ \vdots &{\; \vdots }&{}&{\; \vdots }\\ 0&0& \cdots &{\mathit{\boldsymbol{x}}_1^{\rm{T}}}\\ {\mathit{\boldsymbol{x}}_2^{\rm{T}}}&0& \cdots &0\\ {\; \vdots }&{\; \vdots }&{}&{\; \vdots }\\ 0&0& \cdots &{\mathit{\boldsymbol{x}}_N^{\rm{T}}} \end{array}} \right]\left[{\begin{array}{*{20}{c}} {{\mathit{\boldsymbol{h}}_1}}\\ {{\mathit{\boldsymbol{h}}_2}}\\ \vdots \\ {{\mathit{\boldsymbol{h}}_L}} \end{array}} \right] $ | (9) |

为了表达成最小二乘的规范形式, 引进矩阵S ∈ R N×K2如下:

| $ \mathit{\boldsymbol{S}} = \left[{\begin{array}{*{20}{c}} {{\mathit{\boldsymbol{x}}_1}^T}&{{\mathit{\boldsymbol{x}}_1}^T}& \cdots &{{\mathit{\boldsymbol{x}}_1}^T}\\ {{\mathit{\boldsymbol{x}}_2}^T}&{{\mathit{\boldsymbol{x}}_2}^T}& \cdots &{{\mathit{\boldsymbol{x}}_2}^T}\\ \vdots & \vdots &{}& \vdots \\ {{\mathit{\boldsymbol{x}}_N}^T}&{{\mathit{\boldsymbol{x}}_N}^T}& \cdots &{{\mathit{\boldsymbol{x}}_N}^T} \end{array}} \right] $ | (10) |

则式 (4) 在式 (6)、(7) 的约束条件下可以表达为求解:

| $ {\mathit{\boldsymbol{x}}^*} = \mathop {{\rm{arg}}\;{\rm{min}}}\limits_\mathit{\boldsymbol{x}} {\left\| {\mathit{\boldsymbol{Mx}}-\mathit{\boldsymbol{b}}} \right\|_2}^2 $ | (11) |

| $ {\rm{s}}{\rm{.t}}{\rm{.}}\;\;\;\;\;\mathit{\boldsymbol{Mx}} \ge \mathit{\boldsymbol{0}}{\rm{ ; }}\mathit{\boldsymbol{Sx}} = \mathit{\boldsymbol{1}} $ |

根据式 (11) 即可求解得到正则化因子H。

整个算法的流程如下。

算法1 基于潜语义主题加强的跨媒体检索算法。

输入:带有类别信息的图像和文本, 统一表示为

1) 根据式 (1)、(2) 求解得到图像和文本的潜语义主题。

图像的潜语义主题: X=[x1, x2, …, xn]T

文本的潜语义主题: T=[t1, t2, …, tn]T

2) 对每一个类别 (i=1, 2, …, L) 求解:

根据式 (9)、(10) 中M、b、S的定义计算正则化矩阵。

输出:正则化矩阵H=[H1, H2, …, HL]。

图像的潜语义主题乘上正则化因子, 即可得到初步正则化的图像, 使用文本每一个类别的后验概率乘上初步正则化图像的每一类别的后验概率, 即可实现图像和文本的潜语义主题之间的关联最大化。

3 实验分析 3.1 实验数据集和数据表示为了验证本文算法的有效性, 实验在Wikipedia和TVGraz这两个跨媒体检索常用的数据集上进行。Wikipedia数据集包含2866个文本图像数据对, 这些文本图像数据对属于10个不同的类别, 实验随机选取2173个文本图像数据对作训练, 剩余693个图像文本数据对作测试。TVGraz包含2058个图像文本数据对, 这些图像文本数据对同样属于10个不同的类别, 实验采用1588个文本图像数据对做训练, 500个图像文本数据对测试。在所有实验中, 图像表示基于词袋 (BoW) 模型, 即用1024个视觉词码量化图像提取的SIFT (Scale-Invariant Feature Transform) 特征; 文本表示基于LDA模型, 即计算每个文本基于100个隐含主题的概率。

3.2 度量标准实验采用皮尔逊相关系数来度量特征向量之间的相似性, 通过相似性对检索结果进行排序, 将排序后的检索结果作为查询返回的结果。其计算公式如下:

| $ \begin{array}{l} {\rho _{x, y}} = \frac{{{\mathop{\rm cov}} \left( {\mathit{\boldsymbol{X}}, Y} \right)}}{{{\sigma _\mathit{\boldsymbol{X}}}{\sigma _Y}}} = \\ \;\;\;\;\;\;\;\;\;\frac{{E\left( {\left( {\mathit{\boldsymbol{X}}-{\mu _\mathit{\boldsymbol{X}}}} \right)\left( {Y-{\mu _Y}} \right)} \right)}}{{\sqrt {E\left( {{\mathit{\boldsymbol{X}}^2}} \right)-{E^2}\left( \mathit{\boldsymbol{X}} \right)\sqrt {E\left( {{Y^2}} \right) - {E^2}\left( Y \right)} } }} \end{array} $ |

实验采用平均查准率 (mean Average Precision, mAP) 和召回率对算法的性能进行评价。查准率 (Average Precision, AP) 的计算公式如下:

| $ AP = \frac{1}{L}\sum\limits_{r = 1}^R {prec\left( r \right)\delta \left( r \right)} $ |

其中:L为查询返回的结果中相关结果的个数;R为查询返回的结果总数;prec(r) 表示返回的结果在r处的排名精度;δ(r)=1表示返回的结果相关;δ(r)=0则表示返回的结果不相关。本文实验中R=10。

3.4 实验结果与分析在Wikipedia和TVGraz两个数据集上实验, 将本文提出的LSTR算法与主流跨媒体CCA算法、SM (Semantic Matching) 算法、SCM (Semantic Correlation Matching) 算法的平均查准率 (mAP) 进行对比, mAP为文本检索图像和图像检索文本的AP的平均值。对比情况如表 1所示。

| 表 1 Wikidedia和TVGraz数据集中算法性能对比 Table 1 Comparitions of cross-media retrieval performance on Wikideia and TVGraz datasets |

从表 1可以看出,本文算法性能明显高于对比算法的性能, 尤其是文本检索图像的平均查准率。其次, 从表 1不同数据集的对比可以看出, 在TVGraz数据集上各个算法的性能明显高于Wikipedia数据集。这是由于TVGraz数据集的图像都是一个特定的物体或动物类别比较明显, 而Wikipedia图像反映的内容则比较抽象, 类别属性模糊。

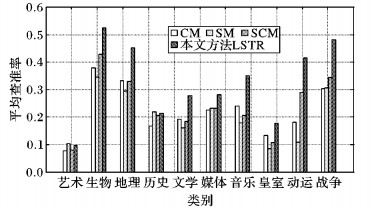

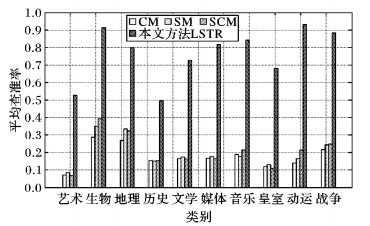

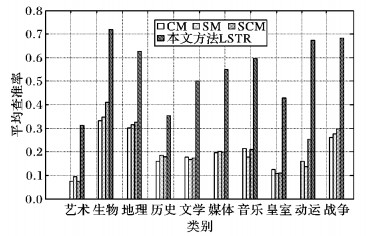

实验不仅对整体数据的平均查准率进行了分析, 还比较了不同类别的样例在Wikipedia数据集上的平均查准率。

图 2为图像检索文本的不同类别样例的平均查准率, 图 3为文本检索图像的不同类别样例的平均查准率 (), 图 4为不同类别样例平均查准率。对比图 2~4可看出, 本文算法的性能大幅度高于对比算法的性能, 特别是文本检索图像。这是因为文本检索图像时, 图像是被文本正则化之后的图像, 它的潜语义主题与文本的潜语义主题相近, 而图像检索文本时, 图像只是利用多分类回归模型提取的潜语义主题, 没有经过相应文本的正则化。

|

图 2 不同类别样例的平均查准率 (图像检索文本) Figure 2 mAP for different classes (retrieving text with image) |

|

图 3 不同类别样例的平均查准率 (文本检索图像) Figure 3 mAP for different classes (retrieving image with text) |

|

图 4 不同类别样例的平均查准率 Figure 4 mAP for different classes |

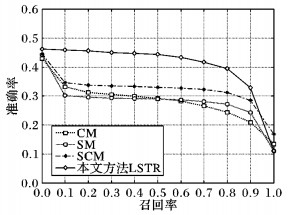

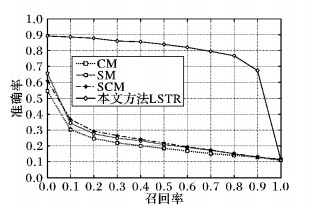

平均查准率只是信息检索算法性能评价的一个标准, 除了平均查准率, 实验还在Wikipedia数据集上对文本检索图像和图像检索文本的检索结果的准确率-召回率曲线进行了分析, 如图 5~6所示。

|

图 5 图像检索文本的准确率-召回率曲线 Figure 5 Precision-recall curves of retrieving text with image |

|

图 6 文本检索图像的准确率-召回率曲线 Figure 6 Precision-recall curves of retrieving image with text |

从图 5~6可看出:本文提出的LSTR算法随着召回率的增大, 其检索结果准确率的下降幅度比CCA、SM、SCM等跨媒体检索算法的下降幅度要平缓, 即本文算法的准确率受召回率的影响较小, 也就是当检索结果的召回率增大时, 本文算法仍能保持较高的准确率。

4 结语本文提出的基于潜语义加强的跨媒体检索算法, 在Wikipedia和TVGraz两个数据集上的实验, 验证了本文算法能有效提高跨媒体检索的查准率, 尤其是文本检索图像的查准率, 为跨媒体检索提供了一种新的思路:使用文本的语义去强化图像的语义, 使图像和文本的潜语义主题达到一致, 来实现图像和文本的相互检索。但另一方面, 本文算法在图像检索文本时查准率提高的幅度不大, 这是因为没有相应的文本对查询的图像进行语义加强, 图像和文本的关联没有最大化, 所以如何对无文本注释的图像加强其潜语义主题还有待进一步的探索。

| [1] | 吴飞, 庄越挺. 互联网跨媒体分析与检索:理论与算法[J]. 计算机辅助设计与图形学学报, 2010, 22 (1) : 1-9. ( WU F, ZHUANG Y T. Cross media analysis and retrieval on the Web: theory and algorithm[J]. Journal of Computer-Aided Design and Computer Graphics, 2010, 22 (1) : 1-9. doi: 10.3724/SP.J.1089.2010.10439 ) |

| [2] | CHEN X, LIU H, CARBONELL J G. Structured sparse canonical correlation analysis[EB/OL].[2016-03-10]. https://www.cs.cmu.edu/~jgc/StructuredSparseCanonicalCorrelationAnalysisAISTATS2012.pdf. |

| [3] | 张鸿, 吴飞, 庄越挺, 等. 一种基于内容相关性的跨媒体检索方法[J]. 计算机学报, 2008, 31 (5) : 820-826. ( ZHANG H, WU F, ZHUANG Y T, et al. Cross-media retrieval method based on content correlation[J]. Chinese Journal of Computers, 2008, 31 (5) : 820-826. ) |

| [4] | PUTTHIVIDHY D, ATTIAS H T, NAGARAJAN S S. Topic regression multi-modal latent Dirichlet allocation for image annotation[C]//Proceedings of the 2010 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ: IEEE, 2010: 3408-3415. |

| [5] | WU F, JIANG X, LI X, et al. , Cross-modal learning to rank via latent joint representation[J]. IEEE Transactions on Image Processing, 2015, 24 (5) : 1497-1509. doi: 10.1109/TIP.2015.2403240 |

| [6] | GONG Y, KE Q, ISARD M, et al. A multi-view embedding space for modeling Internet images, tags, and their semantics[J]. International Journal of Computer Vision, 2014, 106 (2) : 210-233. doi: 10.1007/s11263-013-0658-4 |

| [7] | ZHEN Y, YEUNG D Y. A probabilistic model for multimodal hash function learning[C]//KDD 2012: Proceedings of the 18th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. New York: ACM, 2012: 940-948. |

| [8] | SHANG X, ZHANG H, CHUA T-S. Deep learning generic features for cross-media retrieval[C]//MMM 2016: Proceedings of the 22nd International Conference on MultiMedia Modeling, LNCS 9516. Berlin: Springer, 2016: 264-275. |

| [9] | FROME A, CORRADO G, SHLENS J, et al. DeViSE: a deep visual-semantic embedding model[EB/OL].[2016-03-10]. https://papers.nips.cc/paper/5204-devise-a-deep-visual-semantic-embedding-model.pdf. |

| [10] | MA L, LU Z, SHANG L, et al. Multimodal convolutional neural networks for matching image and sentence[C]//Proceedings of the 2015 IEEE International Conference on Computer Vision. Piscataway, NJ: IEEE, 2015: 2623-2631. |

| [11] | SRIVASTAVA N, SALAKHUTDINOV R. Multimodal learning with deep Botzmann machines[EB/OL].[2016-03-10]. http://jmlr.org/papers/volume15/srivastava14b/srivastava14b.pdf. |

| [12] | WU F, YU Z, YI Y, et al. Sparse multi-modal hashing[J]. IEEE Transactions on Multimedia, 2014, 16 (2) : 427-439. doi: 10.1109/TMM.2013.2291214 |

| [13] | ZHUANG Y, YU Z, WANG W, et al. Cross-media hashing with neural networks[C]//MM 2014: Proceedings of the 22nd ACM International Conference on Multimedia. New York: ACM, 2014: 901-904. |

| [14] | RAFAILIDIS D, CRESTANI F. Cluster-based joint matrix factorization hashing for cross-modal retrieval[C]//SIGIR 2016: Proceedings of the 39th International ACM SIGIR Conference on Research and Development in Information Retrieval. New York: ACM, 2016: 781-784. |

| [15] | ZHAO F, HUANG Y, WANG L, et al. Deep semantic ranking based hashing for multi-label image retrieval[C]//Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ: IEEE, 2015: 1556-1564. |