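With the development of Internet technology and the spread of personal handheld devices, image and video data on the Internet are growing exponentially. Internet companies want, on the one hand, to manage this massive volume of image data conveniently and effectively and, on the other hand, to accommodate users' search habits, namely Text-Based Image Retrieval (TBIR). To that end, they attach tag information to each image, which is known as image annotation. The relatively mature approach in current use extracts contextual information from the Web page containing the image as its tags[1], but it suffers from problems such as heavy noise and images that have no contextual text at all. Meanwhile, in urban security, the annotation of scenes in surveillance video is attracting growing attention. Researchers have therefore proposed methods that annotate images automatically from the visual information of the image itself.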

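Current automatic image annotation methods fall into two main categories: methods based on statistical classification and methods based on probabilistic models. Classification-based methods treat each annotation word as a class, so that image annotation can be viewed as image classification; because each image carries several different annotation words, however, image annotation is also a multi-label learning problem. The main methods include those based on the Support Vector Machine (SVM)[2-5], K-Nearest Neighbor (KNN) classification[6-7], decision trees[8-9], the Back Propagation Neural Network (BPNN)[10], and deep learning[11-12]. Probability-based methods extract visual information (such as color, shape, texture, and spatial relations) from an image or image region, compute the joint probability distribution between the visual features and the annotation words, and then use that distribution to annotate unlabeled images or regions. The main methods include the machine translation models proposed by Duygulu et al.[13] and Ballan et al.[14], and topic-related models[15-17].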

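Traditional methods have made some progress in image annotation, but because they rely on manually selected features they lose information, which leads to insufficient annotation precision and low recall. Deep learning models have achieved strong results in image recognition and classification, but most of that work improves the network itself or targets single-label learning; applications and improvements aimed at image annotation, which is a multi-label learning task, are comparatively few. Based on the characteristics of multi-label learning and taking the uneven distribution of annotation words into account, this paper therefore proposes a multi-label learning convolutional neural network method with annotation-result improvement. First, the error function of the convolutional neural network is modified; then, a multi-label learning convolutional neural network suited to automatic image annotation is built; finally, the co-occurrence relations among annotation words are used to improve the annotation results.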

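1 Convolutional neural network

The Convolutional Neural Network (CNN) originates from the neocognitron that Fukushima et al.[18] proposed on the basis of the receptive-field concept, and Le Cun et al.[19] achieved a breakthrough with it on the MNIST (Modified National Institute of Standards and Technology database) handwritten digit dataset. The local connections, downsampling, and weight sharing used by CNNs preserve the edge-pattern and spatial-position information of an image while reducing the complexity of the network. In addition, a CNN learns image features through training, which largely resolves the information loss caused by manual feature selection in traditional methods. Researchers have since made CNNs markedly more effective through a series of measures[20-24], including adjusting the network structure, modifying the activation function, changing the pooling method, adding multi-scale processing, Dropout, and mini-batch normalization. For example, in 2015 Microsoft Research Asia achieved, for the first time, a classification error rate on the ILSVRC (ImageNet Large Scale Visual Recognition Challenge) image dataset lower than that of human recognition[25]. This paper therefore attempts to exploit the strength of CNNs in self-learning image features to annotate images automatically.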

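1.1 Convolutional layer and pooling layer

A typical convolutional neural network usually consists of an input layer, several alternating convolutional and pooling layers, fully connected layers, and an output layer. The convolutional layer is the key component for feature extraction. Each convolutional layer can use several different convolution kernels and thus produce several different feature maps. The output of a convolutional layer is given by Eq. (1):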

| $x_{j}^{l}=f(\sum\limits_{i\in {{M}_{j}}}{x_{i}^{l-1}*k_{j}^{l}}+b_{j}^{l})$ | (1) |

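where $x_j^l$ is the feature map output by the $j$-th convolution kernel of layer $l$; $M_j$ is the set of input feature maps $x^{l-1}$; $k_j^l$ is a convolution kernel of layer $l$; $b_j^l$ is the bias added to the feature map $x_j^l$ in layer $l$; $f(\cdot)$ is a nonlinear function; and $*$ denotes the convolution operation.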
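As a rough illustration of Eq. (1), the following NumPy sketch computes one output feature map from a set of input maps. It follows the formula as written, with a single kernel shared across the input maps, and assumes a "valid" convolution with stride 1 and ReLU as $f(\cdot)$; the function names `conv2d_valid` and `conv_layer_output` are hypothetical and not taken from the paper's code.

```python
import numpy as np

def conv2d_valid(x, k):
    """'Valid' 2-D convolution of one feature map x with one kernel k (kernel is flipped)."""
    kh, kw = k.shape
    k_flipped = k[::-1, ::-1]
    out_h, out_w = x.shape[0] - kh + 1, x.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for r in range(out_h):
        for c in range(out_w):
            out[r, c] = np.sum(x[r:r + kh, c:c + kw] * k_flipped)
    return out

def conv_layer_output(prev_maps, kernel, bias):
    """Eq. (1): sum the convolved input maps, add the bias, apply f (ReLU assumed)."""
    total = sum(conv2d_valid(x, kernel) for x in prev_maps)
    return np.maximum(total + bias, 0.0)

# Two 4x4 input maps and one 2x2 kernel give a 3x3 output map.
prev_maps = [np.arange(16, dtype=float).reshape(4, 4), np.ones((4, 4))]
kernel = np.array([[1.0, 0.0], [0.0, -1.0]])
feature_map = conv_layer_output(prev_maps, kernel, bias=0.5)
```

Many implementations use a separate kernel for each input-output pair of maps; the single shared kernel here simply mirrors the notation of Eq. (1).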

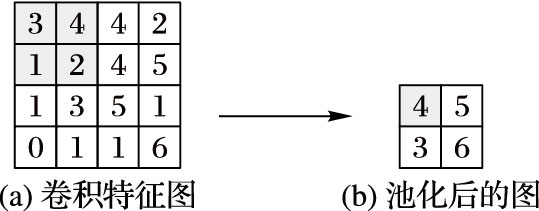

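Because the convolution operation repeatedly covers the same elements, a pooling operation is applied after convolution to reduce redundant information and to lower the feature dimensionality quickly. Common choices are max pooling, average pooling, and pyramid pooling[20]. After one pooling operation, each spatial dimension of the feature map is reduced to 1/n of its original size, where n is the pooling size, as shown in Figure 1.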

Figure 1 Schematic diagram of 2×2 max pooling

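As Figure 1 shows, the maximum value in each 2×2 pooling window is selected as the output, which is then passed through the activation function (omitted in Figure 1) to give the feature map of the pooling layer.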
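The pooling step can be sketched in a few lines of NumPy. This is a minimal example that assumes non-overlapping windows and drops any trailing rows or columns that do not fill a window; the helper name `max_pool` is hypothetical.

```python
import numpy as np

def max_pool(x, n=2):
    """Non-overlapping n x n max pooling over a 2-D feature map."""
    h, w = x.shape[0] // n, x.shape[1] // n
    # Reshape so that axes 1 and 3 index the position inside each window.
    windows = x[:h * n, :w * n].reshape(h, n, w, n)
    return windows.max(axis=(1, 3))

x = np.array([[1, 3, 2, 4],
              [5, 6, 7, 8],
              [3, 2, 1, 0],
              [1, 2, 3, 4]])
pooled = max_pool(x)  # 4x4 input, 2x2 output
```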

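1.2 Loss function based on multi-label learning

In supervised learning, a loss function measures the degree of error between the predicted output and the true value, and the weights are adjusted by minimizing it. A convolutional neural network is likewise trained by supervised learning and usually takes the Mean Squared Error (MSE) as its loss function, as in Eqs. (2)-(3):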

| $E=\frac{1}{m}\sum\limits_{i=1}^{m}{E(i)}$ | (2) |

| $E(i)=\frac{1}{2}\sum\limits_{k=1}^{n}{{{(d_{i}^{k}-y_{i}^{k})}^{2}}}$ | (3) |

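where $E(i)$ is the training error of a single sample; $d_i$ is the expected output for input $x_i$ and $y_i$ is the network's predicted output for $x_i$, with $d_i^k$ and $y_i^k$ their $k$-th components; $n$ is the number of output nodes; and $m$ is the number of samples.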

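However, most loss functions, including this one, only consider whether a given label belongs to a sample x, treating every label alike, and do not distinguish the labels that belong to x from those that do not. To make the convolutional neural network better suited to automatic image annotation, this paper therefore applies to it the ranking loss function proposed in reference [10] (Eq. (4)):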

| $E=\sum\limits_{i=1}^{m}{{{E}_{i}}}=\sum\limits_{i=1}^{m}{\frac{1}{|{{Y}_{i}}||\overline{{{Y}_{i}}}|}}\sum\limits_{(k,l)\in {{Y}_{i}}\times \overline{{{Y}_{i}}}}{\exp (-(c_{k}^{i}-c_{l}^{i}))}$ | (4) |

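where $Y_i$ and $\overline{Y_i}$ are the sets of labels that do and do not belong to sample $i$, respectively. $c^i$ is the set of network output values for sample $i$, divided into two parts, $c_k^i$ and $c_l^i$, according to whether the corresponding label is an annotation word of sample $i$. This error function differs from others in that it considers the two groups of labels separately and measures the difference between them. In image annotation, a label $k$ that belongs to sample $i$ should rank higher than a label $l$ that does not, so the more $c_k^i$ exceeds $c_l^i$, the better. When the values in $c^i$ are then sorted in descending order, the labels that belong to sample $i$ appear first and the others follow. The more labels that do not belong to the sample rank ahead of labels that do, the larger the ranking error.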
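To make Eq. (4) concrete, here is a small NumPy sketch of the pairwise ranking loss. It is an illustrative forward computation only, not the paper's Theano code; the function name `ranking_loss` is hypothetical, and skipping samples whose positive or negative label set is empty is an assumption the text does not address.

```python
import numpy as np

def ranking_loss(outputs, labels):
    """Eq. (4): pairwise exponential ranking loss, summed over samples.

    outputs: (m, q) network outputs c^i
    labels:  (m, q) binary matrix, 1 where the label belongs to the sample
    """
    total = 0.0
    for c, y in zip(outputs, labels):
        pos, neg = c[y == 1], c[y == 0]
        if pos.size == 0 or neg.size == 0:
            continue  # assumption: skip samples with an empty Y_i or complement
        diffs = pos[:, None] - neg[None, :]  # c_k - c_l for every (k, l) pair
        total += np.exp(-diffs).sum() / (pos.size * neg.size)
    return total

labels = np.array([[1, 0, 1, 0]])
good = np.array([[2.0, -1.0, 1.5, -0.5]])   # relevant labels score highest
bad = np.array([[-1.0, 2.0, -0.5, 1.5]])    # irrelevant labels score highest
loss_good, loss_bad = ranking_loss(good, labels), ranking_loss(bad, labels)
```

The loss is small when every relevant label outscores every irrelevant one and grows quickly when the order is reversed.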

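In image annotation, however, an image usually corresponds to several annotation words. Some annotation words occur across many different scenes while others occur only in specific settings, so the annotation words are unevenly distributed. For example, words such as blue sky and tree occur far more often than other annotation words, whereas words such as lizard and tiger occur less often. To raise the recall of low-frequency words, this paper modifies Eq. (4) as follows: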

| $E=\sum\limits_{i=1}^{m}{{{E}_{i}}}=\sum\limits_{i=1}^{m}{\frac{1}{|{{Y}_{i}}||\overline{{{Y}_{i}}}|}}\sum\limits_{(k,l)\in {{Y}_{i}}\times \overline{{{Y}_{i}}}}{\exp (-({{\alpha }_{k}}c_{k}^{i}-c_{l}^{i}))}$ | (5) |

where $\alpha_k$ is a coefficient related to word frequency:

| ${{\alpha }_{k}}=\frac{{{L}_{k}}/n}{\max (L/n)}$ | (6) |

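where $L_k$ is the number of samples associated with the $k$-th annotation word and $n$ is the total number of samples. To minimize $E$ during training, $c_k^i$ must be increased when $\alpha_k$ is small, which gives low-frequency words larger output values.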
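The frequency coefficient of Eq. (6) and the weighted loss of Eq. (5) can be sketched as follows. This is a minimal NumPy illustration under the same assumptions as a plain pairwise ranking loss (samples with an empty positive or negative set are skipped); the names `frequency_weights` and `weighted_ranking_loss` are hypothetical.

```python
import numpy as np

def frequency_weights(labels):
    """Eq. (6): alpha_k = (L_k / n) / max(L / n), L_k = samples carrying label k."""
    freq = labels.sum(axis=0) / labels.shape[0]
    return freq / freq.max()

def weighted_ranking_loss(outputs, labels, alpha):
    """Eq. (5): ranking loss with each positive output c_k scaled by alpha_k."""
    total = 0.0
    for c, y in zip(outputs, labels):
        pos_mask = y == 1
        pos, neg = alpha[pos_mask] * c[pos_mask], c[~pos_mask]
        if pos.size == 0 or neg.size == 0:
            continue  # assumption: skip samples with an empty label set
        total += np.exp(-(pos[:, None] - neg[None, :])).sum() / (pos.size * neg.size)
    return total

# Label 0 appears in all four samples, labels 1 and 2 in one sample each.
labels = np.array([[1, 0, 0],
                   [1, 1, 0],
                   [1, 0, 1],
                   [1, 0, 0]])
alpha = frequency_weights(labels)
```

With these weights, a rare label needs a larger positive raw output than a frequent one to contribute the same term to the loss, which is the effect the text describes.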

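2 Annotation improvement based on the annotation-word co-occurrence matrix

In image annotation, a single image contains several objects (annotation words); conversely, annotation words that appear in the same image are correlated in some way, for example sun and blue sky, or beach and sea. These correlations vary in strength. This paper collects statistics over the annotations of all samples to obtain a co-occurrence matrix R of the annotation words: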

| ${{R}_{ij}}=S(i,j)/S(i)$ | (7) |

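where $S(i,j)$ is the number of times annotation words $i$ and $j$ occur together and $S(i)$ is the number of times annotation word $i$ occurs. Eq. (7) shows that the co-occurrence matrix is not symmetric: annotation words $i$ and $j$ are related, but word $i$ may depend more strongly on word $j$ than the reverse. For example, where there is sun there is necessarily sky, whereas sky does not necessarily come with sun. This paper therefore uses the correlation between annotation words to adjust the network's predictions. The output C of the convolutional neural network is transformed by Eq. (8) into the final annotation result O of the model: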

| $O=R*C$ | (8) |
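Eqs. (7) and (8) can be sketched with NumPy as below. Two points are assumptions rather than statements from the paper: the diagonal is taken as $S(i,i)=S(i)$, so $R_{ii}=1$, and the `*` in Eq. (8) is read as a matrix-vector product. The function names are hypothetical.

```python
import numpy as np

def cooccurrence_matrix(labels):
    """Eq. (7): R[i, j] = S(i, j) / S(i), built from a (samples, labels) binary matrix."""
    labels = labels.astype(float)
    joint = labels.T @ labels           # joint[i, j] = S(i, j)
    counts = np.diag(joint).copy()      # counts[i] = S(i)
    counts[counts == 0] = 1.0           # assumption: guard against unused labels
    return joint / counts[:, None]

def refine(R, c):
    """Eq. (8), read here as the matrix-vector product O = R c."""
    return R @ c

# Columns: sun, sky. Sun never appears without sky; sky often appears alone.
labels = np.array([[1, 1],
                   [0, 1],
                   [0, 1],
                   [1, 1]])
R = cooccurrence_matrix(labels)
refined = refine(R, np.array([0.2, 0.9]))
```

In this toy case R is asymmetric (R[sun, sky] = 1 but R[sky, sun] = 0.5), which matches the sun and sky example in the text.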

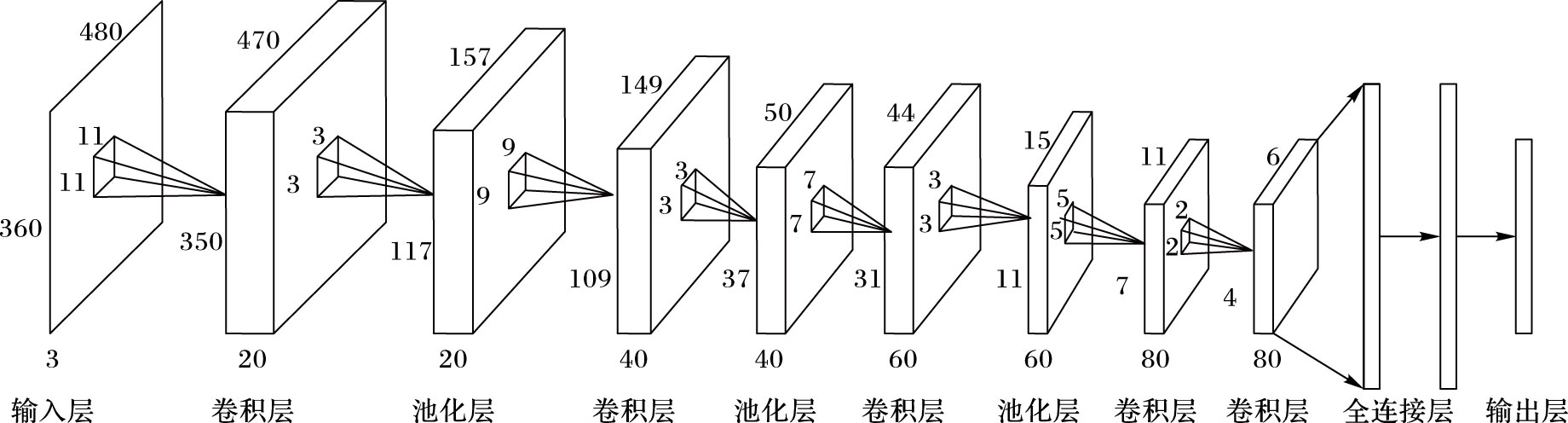

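This paper uses the convolutional neural network structure shown in Figure 2. The input layer is a complete image with three channels (R, G, and B). It is followed by four convolutional layers with kernel sizes 11, 9, 7, and 5 and with 20, 40, 60, and 80 kernels, respectively; four max-pooling layers with pooling sizes 3, 3, 3, and 2; and finally two fully connected layers, which use Dropout with a probability of 0.6 to prevent overfitting. The output layer has 224 nodes. All activation functions are ReLU, and the learning rate is initialized to 0.01.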

Figure 2 CNN structure used in this paper
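As a rough check on how such a stack shrinks its input, the sketch below traces the spatial size of the feature maps through the four convolution and pooling stages. Several things are assumed because the text does not state them: convolution and pooling layers alternate, convolutions are "valid" with stride 1, pooling windows do not overlap and round down, and the image is fed at 480×360 (width × height) without resizing. The helper `feature_map_sizes` is hypothetical.

```python
def feature_map_sizes(height, width, kernels, pools):
    """Trace (height, width) through alternating conv (valid, stride 1) and pooling layers."""
    sizes = []
    for k, p in zip(kernels, pools):
        height, width = height - k + 1, width - k + 1   # convolution
        height, width = height // p, width // p         # non-overlapping pooling
        sizes.append((height, width))
    return sizes

sizes = feature_map_sizes(360, 480, kernels=[11, 9, 7, 5], pools=[3, 3, 3, 2])
# Input length of the first fully connected layer, given 80 maps in the last stage.
flattened = sizes[-1][0] * sizes[-1][1] * 80
```

Under these assumptions the last pooling stage yields 3×5 maps, so the first fully connected layer would receive 1200 values; a different stride, padding, or input size would change these numbers.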

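This paper uses IAPR TC-12, a public image annotation dataset provided by the ImageCLEF organization. It contains 18000 images of size 480×360, of which 14000 form the training set and 4000 the test set, with 276 annotation words. Because some annotation words do not appear in the test set or the training set, 224 annotation words are actually used, and each image carries 4.1 labels on average. The annotation words in this dataset are unevenly distributed: the rarest has only 1 training sample and the most frequent has 3834.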

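This paper first verifies that a convolutional neural network is superior to traditional manual feature selection in feature learning, through comparative experiments with SVM, BPNN, and a CNN using the MSE error function (Convolutional Neural Network using Mean Square Error function, CNN-MSE). It then tests the effectiveness of the multi-label learning convolutional network method (Multi-Label Learning Convolutional Neural Network, CNN-MLL) and of the annotation-result improvement based on annotation-word co-occurrence (hereafter, improved CNN-MLL), and compares them with algorithms that have performed well on this dataset in recent years: Sparse Kernel with Continuous Relevance Model (SKL-CRM)[14], Discrete Multiple Bernoulli Relevance Model with Support Vector Machine (SVM-DMBRM)[4], Kernel Canonical Correlation Analysis and two-step variant of the classical K-Nearest Neighbor (KCCA-2PKNN)[6], and Neighborhood Set based on Image Distance Metric Learning (NSIDML)[7], among others.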

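4.1 Evaluation metrics

This paper uses the average precision P, average recall R, and F1 as the evaluation criteria for the experimental results, computed as follows: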

| $P=\frac{1}{n}\sum\limits_{i=1}^{n}{\frac{A_{i}^{r}}{A_{i}^{y}}}$ |

| $R=\frac{1}{n}\sum\limits_{i=1}^{n}{\frac{A_{i}^{r}}{A_{i}^{d}}}$ |

| $F1=\frac{2PR}{P+R}$ |

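where $A_i^r$ is the number of samples for which annotation word $i$ is predicted correctly; $A_i^y$ is the number of samples whose predicted result contains annotation word $i$; $A_i^d$ is the number of test samples that contain annotation word $i$; and $n$ is the number of annotation words.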
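The three metrics can be computed from binary prediction and ground-truth matrices as in the sketch below. Treating a label that is never predicted, or never present in the test set, as contributing 0 is an assumption; the paper does not say how such labels are handled. The function name `annotation_metrics` is hypothetical.

```python
import numpy as np

def annotation_metrics(pred, truth):
    """Per-label average precision P, average recall R, and F1.

    pred, truth: (samples, labels) binary integer matrices.
    """
    correct = (pred & truth).sum(axis=0).astype(float)   # A_i^r
    predicted = pred.sum(axis=0).astype(float)           # A_i^y
    actual = truth.sum(axis=0).astype(float)             # A_i^d
    # Assumption: labels with a zero denominator contribute 0 to the average.
    precision = np.divide(correct, predicted, out=np.zeros_like(correct), where=predicted > 0)
    recall = np.divide(correct, actual, out=np.zeros_like(correct), where=actual > 0)
    p, r = precision.mean(), recall.mean()
    f1 = 2 * p * r / (p + r) if p + r > 0 else 0.0
    return p, r, f1

truth = np.array([[1, 0], [1, 1], [0, 1], [0, 1]])
pred = np.array([[1, 0], [0, 1], [1, 1], [0, 0]])
p, r, f1 = annotation_metrics(pred, truth)
```

Note that F1 is computed from the averaged P and R, as in the formulas above, rather than averaged per label.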

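4.2 Experimental results

Because the BP neural network and the SVM require manually extracted image features for training and testing, this paper follows the feature extraction methods commonly used in other work[5, 8, 17] and extracts Gist features (feature vector dimension 500), SIFT (Scale-Invariant Feature Transform) features (dimension 3250), wavelet texture features (dimension 500), and color histograms (dimension 250). All are converted to bag-of-words form and combined into a 5000-dimensional feature vector, and the data are normalized.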

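The SVM uses the radial basis function kernel, which performed best on this dataset, with a penalty coefficient of 0.3. The BP neural network has a four-layer structure with 5000 input nodes and two hidden layers of 3000 and 1000 nodes.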

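Because the average number of annotation words per sample is 4.1, which rounds up to 5, this paper takes the 5 annotation words with the highest output probabilities as the annotation result of each test sample and then computes the average precision and average recall. The experimental platform uses an Intel Core i5 processor, and the code is built on the Theano library. The training time of the CNN-MLL network differs little from that of CNN-MSE; both take about one day. The results of each method are listed in Table 1.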
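Selecting the five highest-scoring annotation words per test sample can be sketched as follows. The helper `top_k_labels` is hypothetical and only illustrates the selection rule; ties are broken by whatever order `np.argsort` returns.

```python
import numpy as np

def top_k_labels(scores, k=5):
    """Mark the k highest-scoring labels of each sample in a binary matrix."""
    top = np.argsort(-scores, axis=1)[:, :k]      # indices of the k largest scores
    pred = np.zeros(scores.shape, dtype=int)
    np.put_along_axis(pred, top, 1, axis=1)
    return pred

# One sample with eight candidate labels; k = 5 as in the experiments.
scores = np.array([[0.1, 0.9, 0.3, 0.8, 0.2, 0.7, 0.6, 0.05]])
pred = top_k_labels(scores)
```

The resulting binary matrix has the same shape as the ground-truth label matrix, so it can be compared with it label by label.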

Table 1 Experimental results of image annotation algorithms (%)

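Figures in Table 1 for algorithms with a reference are taken from the cited papers. Table 1 shows that the CNN-MSE annotation method improves considerably in both average precision and average recall: its average precision is 37.9% higher than that of BPNN and its average recall is 12.9% higher than that of SVM. This indicates that, on a large image dataset, the features learned by a convolutional neural network are much better and more discriminative than traditional hand-selected features. Comparing CNN-MSE with CNN-MLL shows that the loss function with the improved multi-label ranking strategy outperforms the common mean squared error function, raising average precision and average recall by 15.0% and 17.1%, respectively. Finally, using the co-occurrence relations among annotation words to refine the network's annotations raises average precision and average recall by a further 6.5% and 2.5%. Overall, the proposed method improves on the plain convolutional neural network by 22.5% in average precision and 20.0% in average recall, a clear improvement. In addition, although the average precision of the proposed method is lower than that of the other methods, its average recall is much higher, 13.5% above that of the 2PKNN-ML method, and its F1 value is the highest.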

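To verify the effectiveness of the modification to Eq. (4), this paper also compares the error function of Eq. (4) with that of Eq. (5) with respect to low-frequency words. The average precision and average recall of labels with fewer than 150 samples, as well as the overall average precision, are reported in Table 2.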

Table 2 Experimental results of two kinds of multi-label learning error function (%)

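Table 2 shows that, for low-frequency words, the improved error function (Eq. (5)) gives much higher annotation precision and recall than the unimproved one (Eq. (4)), and its overall average precision and average recall are also slightly higher. The modification is therefore effective.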

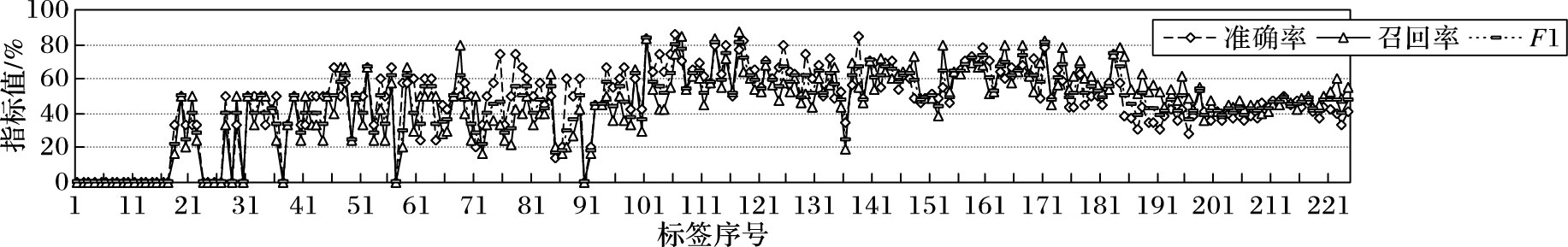

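Considering the imbalance of the samples, this paper gives the precision and recall curves for each annotation word obtained by the improved CNN-MLL method, as shown in Figure 3.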

Figure 3 Precision, recall, and F1 value of each label in the dataset

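The label indices in Figure 3 are assigned by sorting the labels in ascending order of their number of training samples. For labels with only 1 to 10 training samples (labels 1 to 17), the curves are all 0, mainly because these samples make up too small a share of the training set and are essentially ignored during training. For labels with 100 to 300 samples (labels 101 to 181), the curves are generally higher than elsewhere, averaging above 60%. The sample counts in this range are fairly evenly distributed, the number of test samples stays at around 50, and most of these words denote concrete objects with distinctive features. There are exceptions, such as labels 110, 135, and 153, which are generic-objects, construction-other, and mammal-other, respectively. Although these labels also denote concrete objects, they cover a broad range of things and occasionally resemble other annotation words, so they are recognized less well. After label 181, the curves begin to fall but remain fairly stable. The annotation words in this range have many training samples, with counts that vary widely (from 300 to 3000), and their test samples are likewise numerous and uneven. These are also high-frequency words such as blue sky, tree, and crowd, along with some abstract words; although training samples are plentiful, the same annotation word looks very different from sample to sample, so misclassifications are frequent. Figure 3 also shows that recall starts to exceed precision after label 142.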

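The analysis above shows that the accuracy of the manual annotations in the image sample library, the degree of variation among samples, and the balance of the sample-count distribution all strongly affect the annotation experiments.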

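5 Conclusion

This paper proposes a model that combines a multi-label learning convolutional neural network with annotation-result improvement based on annotation-word co-occurrence. In experiments on the large-scale IAPR TC-12 automatic image annotation dataset, the proposed method clearly improves on SVM and BPNN in both precision and recall. Compared with the algorithms that currently annotate best, its precision is lower but its recall is higher. Taking precision and recall together, the proposed method improves annotation performance, which demonstrates its effectiveness.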

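Future work will proceed in two directions: 1) optimizing the structure of the convolutional neural network; 2) adding hand-crafted features at the fully connected layer to supplement the feature information and improve annotation precision.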

| [1] | XU H T, ZHOU X D, XIANG Y, et al. Adaptive model for Web image semantic automatic annotation[J]. Journal of Software, 2010, 21 (9) : 2186-2195. |

| [2] | YANG C B, DONG M, HUA J. Region-based image annotation using asymmetrical support vector machine-based multiple instance learning[C]//Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition. Washington, DC:IEEE Computer Society, 2006:2057-2063. |

| [3] | GAO Y, FAN J, XUE X, et al. Automatic image annotation by incorporating feature hierarchy and boosting to scale up SVM classifiers[C]//Proceedings of the 2006 ACM International Conference on Multimedia. New York:ACM, 2006:901-910. |

| [4] | MURTHY V N, CAN E F, MANMATHA R. A hybrid model for automatic image annotation[C]//Proceedings of the 2014 ACM International Conference on Multimedia Retrieval. New York:ACM, 2014:369. |

| [5] | WU W, NIE J Y, GAO G L. Improved SVM multiple classifiers for image annotation[J]. Computer Engineering & Science, 2015, 37 (7) : 1338-1343. |

| [6] | MORAN S, LAVRENKO V. Sparse kernel learning for image annotation[C]//Proceedings of the 2014 International Conference on Multimedia Retrieval. New York:ACM, 2014:113. |

| [7] | VERMA Y, JAWAHAR C V. Image annotation using metric learning in semantic neighbourhoods[M]//ECCV'12:Proceedings of the 12th European Conference on Computer Vision. Berlin:Springer, 2012:836-849. |

| [8] | HOU J, CHEN Z, QIN X, et al. Automatic image search based on improved feature descriptors and decision tree[J]. Integrated Computer Aided Engineering, 2011, 18 (2) : 167-180. |

| [9] | JIANG L X, HOU J. Image annotation using the ensemble learning[J]. Acta Automatica Sinica, 2012, 38 (8) : 1257-1262. doi: 10.3724/SP.J.1004.2012.01257 |

| [10] | ZHANG M L, ZHOU Z H. Multilabel neural networks with applications to functional genomics and text categorization[J]. IEEE Transactions on Knowledge & Data Engineering, 2006, 18 (10) : 1338-1351. |

| [11] | READ J, PEREZCRUZ F. Deep learning for multi-label classification[J]. Machine Learning, 2014, 85 (3) : 333-359. |

| [12] | WU F, WANG Z H, ZHANG Z F, et al. Weakly semi-supervised deep learning for multi-label image annotation[J]. IEEE Transactions on Big Data, 2015, 1 (3) : 109-122. doi: 10.1109/TBDATA.2015.2497270 |

| [13] | DUYGULU P, BARNARD K, DE FREITAS J F G, et al. Object recognition as machine translation:learning a lexicon for a fixed image vocabulary[C]//ECCV 2002:Proceedings of the 7th European Conference on Computer Vision. Berlin:Springer, 2002:97-112. |

| [14] | BALLAN L, URICCHIO T, SEIDENARI L, et al. A cross-media model for automatic image annotation[C]//Proceedings of the 2014 International Conference on Multimedia Retrieval. New York:ACM, 2014:73. |

| [15] | WANG C, BLEI D, LI F F. Simultaneous image classification and annotation[C]//Proceedings of the 2009 IEEE Computer Society Conference on Computer Vision and Pattern Recognition. Washington, DC:IEEE Computer Society, 2009:1903-1910. |

| [16] | LI Z X, SHI Z P, LI Z Q, et al. Automatic image annotation by fusing semantic topics[J]. Journal of Software, 2011, 22 (4) : 801-812. doi: 10.3724/SP.J.1001.2011.03742 |

| [17] | LIU K, ZHANG L M, SUN Y W, et al. An automatic image annotation algorithm using deep Boltzmann machine and canonical correlation analysis[J]. Journal of Xi'an Jiaotong University, 2015, 49 (6) : 33-38. |

| [18] | FUKUSHIMA K, MIYAKE S. Neocognitron:a new algorithm for pattern recognition tolerant of deformations and shifts in position[J]. Pattern Recognition, 1982, 15 (6) : 455-469. doi: 10.1016/0031-3203(82)90024-3 |

| [19] | LE CUN Y, BOSER B, DENKER J S, et al. Handwritten digit recognition with a back-propagation network[M]. San Francisco, CA: Morgan Kaufmann Publishers, 1990 : 396 -404. |

| [20] | KRIZHEVSKY A, SUTSKEVER I, HINTON G E. ImageNet classification with deep convolutional neural networks[M]. . |

| [21] | HE K, ZHANG X, REN S, et al. Spatial pyramid pooling in deep convolutional networks for visual recognition[C]//ECCV 2014:Proceedings of the 13th European Conference on Computer Vision. Berlin:Springer, 2014:346-361. |

| [22] | SZEGEDY C, LIU W, JIA Y, et al. Going deeper with convolutions[C]//Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition. Piscataway, NJ:IEEE, 2015:1-9. |

| [23] | HE K, ZHANG X, REN S, et al. Delving deep into rectifiers:surpassing human-level performance on ImageNet classification[C]//Proceedings of the 2015 IEEE International Conference on Computer Vision. Washington, DC:IEEE Computer Society, 2015:1026-1034. |

| [24] | IOFFE S, SZEGEDY C. Batch normalization:accelerating deep network training by reducing internal covariate shift[C]//Proceedings of the 32nd International Conference on Machine Learning. Washington, DC:IEEE Computer Society, 2015:448-456. |

| [25] | JIN C, JIN S W. Image distance metric learning based on neighborhood sets for automatic image annotation[J]. Journal of Visual Communication and Image Representation, 2016, 34 : 167-175. doi: 10.1016/j.jvcir.2015.10.017 |