ZHANG Lei, born in 1974, M.S., lecturer. Her research interests include computer network, information safety, data mining

ZHU Yixin, born in 1974, Ph. D., lecturer. His research interests include computer network safety, complex network communication

XU Chun, born in 1977, Ph. D., associate professor. Her research interests include computer network, natural language processing

YU Kai, born in1974, Ph. D., associate professor. His research interests include complex network, information dissemination

随着计算机信息科学的飞速发展,软件技术已经渗透到科学、工业、经济和文化的各个领域,对人类社会的进步和发展起着举足轻重的作用,同时影响和改变着人们工作、学习和生活的方式[1]。一方面,软件技术的发展和应用水平已经成为衡量一个国家科学技术实力的标志,但是另一方面,随着软件规模、复杂度、系统集成度的不断上升,软件中存在的缺陷问题大大影响了产品开发的质量和效率[2-3],甚至历史上一些由于软件缺陷而造成的事故也频频发生,比如1962年美国国家宇航局(National Aeronautics and Space Administration, NASA)马里纳1号空间探测器由于Fortran语言少写了一个连字符造成火箭偏离,其损失约8000万美元;20世纪80年代由于错误的软件导致对癌症患者的致命过量辐射事故;20世纪90年代丹麦机场行李处理系统的缺陷导致行李箱损坏,为此花费大量金钱及人力恢复正常运营等。

软件缺陷[4-5]通常指计算机软件或者代码中能引起失效的错误的编码。在软件工程领域,合理地预测缺陷能够有助于及时找出未被发现但是真实存在的缺陷以及缺陷分布[6],因此不仅可以节约大量的成本,提高产品质量,还能够客观地评价测试结果,让开发者合理地权衡潜在的预测风险和测试成本之间的关系,便于科学地进行软件测试工作。虽然不同度量元的数据采集方法不同,但在预测算法中对不同的度量元并不区分处理,预测算法具有通用性。

软件缺陷检测技术[7]分为动态检测和静态检测技术。前者主要靠研究人员通过统计分析、经验研究等方式来考察软件缺陷随软件周期的分布,一些软件的可靠性模型便是基于这类技术;后者通过分析利用软件的规模、复杂度、软件缺陷的度量元等特征数据来预测软件中潜在的缺陷。本文的研究内容针对后者,即静态的软件缺陷检测技术。比如来源于NASA的著名软件度量程序(Metric Data Program, MDP)[8],其主要针对于软件度量元数据的收集、组织、存储等情况,这个公开项目已经成为软件缺陷检测领域经典的数据集。

1 相关算法目前,机器学习算法得到了飞速的发展,其中一些具有代表性的算法已被用于软件缺陷检测领域,比如支持向量机(Support Vector Machine,SVM)[9-10] 、分类回归树(Classification and Regression Tree, CART)[11]、人工神经网络(Artificial Neural Network, ANN)[12]、主成分分析(Principal Component Analysis, PCA)[13-14]、聚类分析(Cluster Analysis, CA)[15-16]、Logistics回归(Logistics Regression, LR)方法[17-18]等。

字典学习方法[19-20]实质上基于稀疏表示方法(Sparse Representation),其通过训练样本构造结构化的字典,使用学习得到的结构化字典对样本进行线性编码,然后利用编码之后的重构误差对样本进行分类,其已被广泛用在信号压缩、图像分类等领域。从目前来看,尽管经典的机器学习方法已经被广泛用于软件缺陷检测领域,但是基于字典学习的方法仍未被利用。从本质上来看,软件度量数据集中的数据依然可以看成是某种形式的信号,因此基于字典学习的算法完全可以应用在软件缺陷检测领域。

常规的字典学习算法不能有效利用数据集之中不同类样本的鉴别性质,具体表现在不能有效构造具有鉴别性质的结构化字典。本文提出了一种基于鉴别字典学习(Discriminant Dictionary Learning, DDL)的软件缺陷检测方法,其通过在传统的字典学习模型目标函数之中加入鉴别约束项来构造具有鉴别性质的字典原子以提高模型的分类性能。

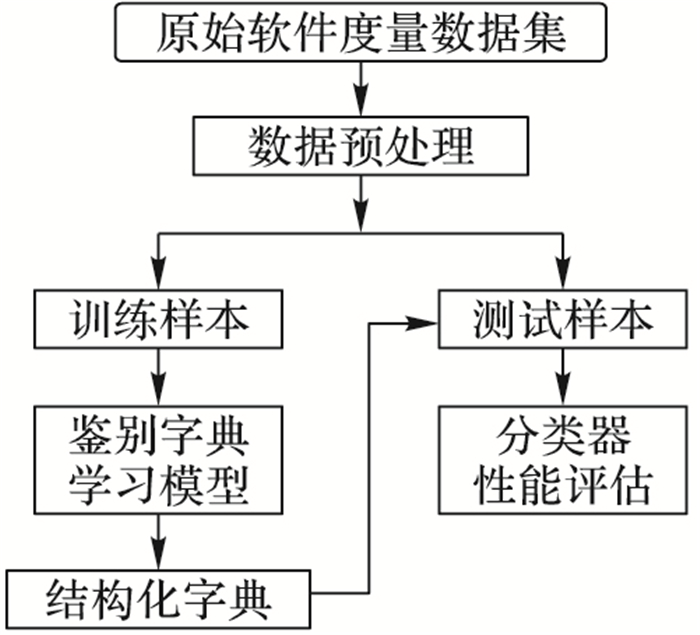

2 本文算法传统的字典学习模型没有较好的鉴别能力,其仅简单地考虑整体字典对于某个数据样本的表示,而没有考虑不同类字典之间的表示关系。本文对传统的字典学习模型进行两点改进:1) 在字典学习模型中,整体字典对某一个样本应该具有较强的表示能力,同时,如果单独考虑这个样本所在类的字典应该也对其具有较强的表示能力,而该类字典对其他类样本则表示能力很差,因此通过引入鉴别约束项可以满足此条件,以达到很好的鉴别性能;2) 字典学习的目的是通过求解表示系数对样本进行表示,因此可以考虑使用字典对不同类样本进行稀疏表示时,它们的表示系数之间应该具有较强的区分能力,从而进一步达到鉴别的目的。本文所提出的基于字典学习的软件缺陷检测流程如图 1所示。

|

图 1 基于字典学习的软件缺陷检测流程 |

稀疏表示最早在信号处理领域中得到应用。由于小波变换和傅里叶变换技术的发展,科研人员需要处理的数据维数越来越高,稀疏表示分类(Sparse Representation Classification, SRC)技术开始引起了研究人员的极大兴趣,并逐步发展形成了一个独立性的理论方法,并且推广到了机器学习、图像处理及模式识别等领域[21]。假设存在一个样本集X={x1, x2, …, xN}∈Rm×n, 其中xi表示X中的某一个样本,根据稀疏表示理论xi可以通过样本集X中的样本以线性组合的形式进行表示:

| $ {\boldsymbol{x}_i} = \sum\limits_{j = 1}^N {{d_{i,j}}{x_j} = } \boldsymbol{X}{\boldsymbol{d}_i} $ | (1) |

其中:

di, j为表示系数,di=[di, 1, di, 2, …, di, t-1, 0, di, t+1, …, di, j-1, di, j, di, j+1, …, diN]T是稀疏系数向量,0表示xi不参与重构。系数d可以转化为如下形式的优化问题:

| $ \begin{gathered} \mathop {\min }\limits_{{\boldsymbol{d}_i}} {\left\| {{\boldsymbol{d}_i}} \right\|_0} \hfill \\ s.t.\;\;\;\;{\boldsymbol{x}_i} = \boldsymbol{X}{\boldsymbol{d}_i} \hfill \\ \end{gathered} $ | (2) |

式(2)是一个NP难问题,较难求解,可以使用l1范数取代l0范数转化得到下式等价的优化问题:

| $ \begin{gathered} \mathop {\min }\limits_{{\boldsymbol{d}_i}} {\left\| {{\boldsymbol{d}_i}} \right\|_1} \hfill \\ s.t.\;\;\;\;{\boldsymbol{x}_i} = \boldsymbol{X}{\boldsymbol{d}_i} \hfill \\ \end{gathered} $ | (3) |

由于噪声和观察值误差的存在,可以放宽上述优化问题的约束条件,得到下式优化问题。

| $ \begin{gathered} \mathop {\min }\limits_{{\boldsymbol{d}_i}} {\left\| {{\boldsymbol{d}_i}} \right\|_1} \hfill \\ s.t.\;\;\;\;\left\| {{\boldsymbol{x}_i} = \boldsymbol{X}{\boldsymbol{d}_i}} \right\| < \varepsilon \hfill \\ \end{gathered} $ | (4) |

其中ε为误差阈值。式(4)可以通过标准线性规划求解。

3.2 鉴别字典学习方法传统的字典学习方法难以学习具有鉴别表示能力的结构化的字典,因为其所有的训练样本共享一个字典集合,本文通过添加鉴别项对其进行改进,最终在学习过程中得到一个结构化的鉴别字典D=D1, D2, …, Di, …, Dc], 其中Di是关于第i类样本的子字典,c是样本的总个数,在训练鉴别的结构化字典之后对数据集进行稀疏表示重构,最后通过重构的误差来进行分类工作,这一过程和SRC分类器类似。

定义A=A1, A2, …, Ai, …, Ac]为给定的训练样本集合,其中Ai为第i类的子样本集合,定义X=X1, X2, …, Xi, …, Xc]为A关于字典集合D的表示系数矩阵,即使用这些系数对于样本集合进行线性组合表示:

| $ \boldsymbol{A} \approx \boldsymbol{DX} $ | (5) |

其中, Xi是第i类样本Ai关于D集合训练得到的表示系数。在本文提出的鉴别字典模型中,不仅要求字典D能够对整体的原始样本集合A有较强的重构能力,同时也需要对于A中每个不同的类别有较强的鉴别能力,基于此设计了包含字典鉴别项的学习模型,其数学模型如下:

| $ {\boldsymbol{J}_{\left( {\boldsymbol{D},\boldsymbol{X}} \right)}} = \mathop {\arg \min }\limits_{\left( {\boldsymbol{D},\boldsymbol{X}} \right)} \left\{ {r\left( {\boldsymbol{A},\boldsymbol{D},\boldsymbol{X}} \right) + \lambda {{\left\| \boldsymbol{X} \right\|}_1}} \right\} $ | (6) |

其中r(A, D, X)是鉴别精确项,其能够较好地衡量样本的鉴别能力,‖X‖1为稀疏表示系数;参数是一个平衡因子。对于某一类样本的稀疏表示系数Xi可以表示为Xi=Xi1, Xi2, …, Xic], 其中Xij是Ai关于子字典Dj的编码系数矩阵。定义字典Dk对Ai的稀疏表示为:

| $ {\boldsymbol{R}_k} = {\boldsymbol{D}_k}\boldsymbol{X}_i^k $ | (7) |

鉴别字典学习模型首先需要使整体字典D能够尽可能近似地表示出任一类样本集合Ai, 因此需要满足下式:

| $ {\boldsymbol{A}_i}\simeq{\text{【}}\boldsymbol{D}{\boldsymbol{X}_i} = {\boldsymbol{D}_1}\boldsymbol{X}_i^1 + {\boldsymbol{D}_2}\boldsymbol{X}_i^2 + \cdots + {\boldsymbol{D}_i}\boldsymbol{X}_i^i + \cdots + {\boldsymbol{D}_c}\boldsymbol{X}_i^c $ | (8) |

其次,由于子字典Di是关于第i类的,显然希望尽可能地使得第i类样本Ai也能够由相应类的子字典Di (非Dj)进行近似表示,此时误差项‖Ai-DXi‖F2及‖Ai-DiXii‖F2均应该最小化;而由不同类的字典表示的值‖DjXij‖F2较小。根据以上的分析,最终本文将鉴别项定义为:

| $ \begin{gathered} r\left( {\boldsymbol{A},\boldsymbol{D},\boldsymbol{X}} \right) = \sum\limits_{i = 1}^c {r\left( {{\boldsymbol{A}_i},\boldsymbol{D},{\boldsymbol{X}_i}} \right)} = \\ \sum\limits_{i = 1}^c {\left( {\left\| {{\boldsymbol{A}_i} - \boldsymbol{D}{\boldsymbol{X}_i}} \right\|_F^2 + \left\| {{\boldsymbol{A}_i} - {\boldsymbol{D}_i}\boldsymbol{X}_i^i} \right\|_F^2 + } \right.} \\ \left. {\sum\limits_{j = 1,j \ne i}^c {\left\| {{\boldsymbol{D}_j}\boldsymbol{X}_i^j} \right\|_F^2} } \right) \\ \end{gathered} $ | (9) |

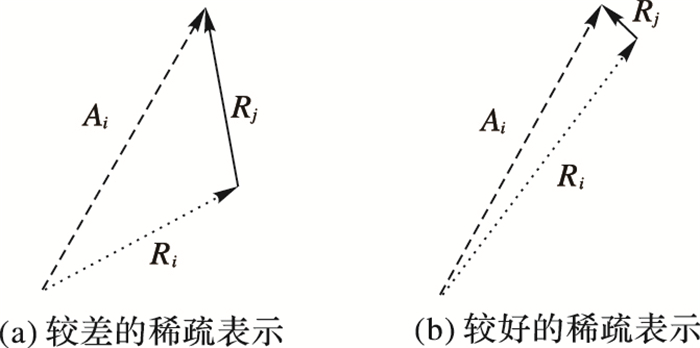

图 2对式(9)中的各项给予直观的显示。其中图(a)表示如果仅最小化‖Ai-DXi‖F2项,那么表示后的样本Ri将可能偏离原始样本Ai,这样会造成子字典Di并不能较好地表示样本Ai。因此为了使模型能够达到较强的样本重构能力同时兼具较强的鉴别性质,本文添加另外两个约束项‖Ai-DiXii‖F2及‖DjXij‖F2,这两项在提出的目标函数中均要求进行最小化。因此,相应的图(b)表示本文所设计的鉴别模型够较好地解决上述问题。

|

图 2 鉴别项示意图 |

为了进一步改善字典D的鉴别能力,本文考虑在稀疏表示项中添加Fisher鉴别约束项f(X), 以使得使用字典D对图像集A进行表示时有较好的区分能力。因此可以定义关于表示系数矩阵X的类内、类间散度矩阵如下:

| $ \begin{gathered} {S_W}\left( \boldsymbol{X} \right) = \sum\limits_{i = 1}^c {\sum\limits_{{\boldsymbol{x}_k} \in {\boldsymbol{X}_i}} {\left( {{\boldsymbol{x}_k} - {\boldsymbol{m}_i}} \right){{\left( {{\boldsymbol{x}_k} - {\boldsymbol{m}_i}} \right)}^T}} } \hfill \end{gathered} $ | (10) |

| $ \begin{gathered} {S_B}\left( \boldsymbol{X} \right) = \sum\limits_{i = 1}^c {{n_i}\left( {{\boldsymbol{m}_i} - \boldsymbol{m}} \right){{\left( {{\boldsymbol{m}_i} - \boldsymbol{m}} \right)}^T}} \hfill \end{gathered} $ | (11) |

其中:mi表示第i类表示系数的均值,xk表示第i类中一个样本表示系数,m为总的样本表示均值,ni是第i类样本的个数。Fisher鉴别准则的目标函数通常可以化简为tr(SW(X))/tr(SB(X))的形式,其中tr( )表示矩阵的迹。另外也有一些专家提出了Fisher鉴别的另一种形式,即散度差形式:

| $ f\left( \boldsymbol{X} \right) = tr\left( {{S_W}\left( \boldsymbol{X} \right)} \right) - a \cdot tr\left( {{\boldsymbol{S}_B}\left( \boldsymbol{X} \right)} \right) $ | (12) |

在本文的目标函数中采用散度差的约束形式以便于优化推导,同时取a的值为1。因此得到的Fisher鉴别项为:

| $ f\left( \boldsymbol{X} \right) = tr\left( {{S_W}\left( \boldsymbol{X} \right)} \right) - tr\left( {{S_B}\left( \boldsymbol{X} \right)} \right) $ | (13) |

式(13)中的目标函数是非凸的,并且不够稳定,本文引入弹性项‖X‖F2, 以使得f(X)能够平滑且收敛;而引入弹性项的另外一个好处是可以和式(6)中的‖X‖1融合,以便在满足稀疏约束的同时使f(X)更加稳定。因此添加Fisher鉴别约束项后的目标函数由式(6)变换为:

| $ \begin{gathered} \mathop {\min }\limits_{\left( {D,X} \right)} \left\{ {r\left( {\boldsymbol{A},\boldsymbol{D},\boldsymbol{X}} \right) + {\lambda _1}{{\left\| \boldsymbol{X} \right\|}_1} + } \right. \hfill \\ \left. {\left. {{\lambda _2}\left( {tr\left( {{S_W}\left( \boldsymbol{X} \right)} \right) - tr\left( {{S_B}\left( \boldsymbol{X} \right)} \right)} \right) + \eta \left\| \boldsymbol{X} \right\|_F^2} \right)} \right\} \hfill \\ s.t.\;\;\;\;{\left\| {{\boldsymbol{d}_n}} \right\|_2} = 1,\forall n \hfill \\ \end{gathered} $ | (14) |

式(14)可以表示为如下形式:

| $ \begin{gathered} \mathop {\min }\limits_{\left( {\boldsymbol{D},\boldsymbol{X}} \right)} \sum\limits_{i = 1}^c {\left( {\left\| {{\boldsymbol{A}_i} - \boldsymbol{D}{\boldsymbol{X}_i}} \right\|_F^2 + \left\| {{\boldsymbol{A}_i} - \boldsymbol{DX}_i^i} \right\|_F^2} \right) + {\lambda _1}{{\left\| \boldsymbol{X} \right\|}_1} + } \hfill \\ \left. {{\lambda _2}\left( {tr\left( {{S_W}\left( \boldsymbol{X} \right)} \right) - tr\left( {{S_B}\left( \boldsymbol{X} \right)} \right) + \eta \left\| \boldsymbol{X} \right\|_F^2} \right)} \right\} \hfill \\ s.t.\;\;\;\;{\left\| {{\boldsymbol{d}_n}} \right\|_2} = 1,\forall n;\left\| {{\boldsymbol{\boldsymbol{D}}_j}\boldsymbol{X}_i^j} \right\|_F^2 \leqslant \varepsilon ,\forall i \ne j \hfill \\ \end{gathered} $ | (15) |

式(15)中‖DjXij‖F2≤ε为约束项,其表示使得非本类的字典对该类样本表示的效果很差。式(15)的表示形式较为复杂,考虑到‖DjXij‖F2的值应该很小,近似为0,而且从物理意义来看,其相当于Xij=0。因此式(15)可以化简为:

| $ \begin{gathered} \mathop {\min }\limits_{\left( {\boldsymbol{D},\boldsymbol{X}} \right)} \sum\limits_{i = 1}^c {\left( {\left\| {{\boldsymbol{A}_i} - \boldsymbol{D}{\boldsymbol{X}_i}} \right\|_F^2 + \left\| {{\boldsymbol{A}_i} - \boldsymbol{DX}_i^i} \right\|_F^2} \right) + } \hfill \\ \;\;\;\;\;{\lambda _1}{\left\| \boldsymbol{X} \right\|_1} + {\lambda _2}\left( {tr\left( {{S_W}\left( \boldsymbol{X} \right)} \right) - tr\left( {{S_B}\left( \boldsymbol{X} \right)} \right) + } \right. \hfill \\ \;\;\;\;\;\left. {\left. {\eta \left\| \boldsymbol{X} \right\|_F^2} \right)} \right\} \hfill \\ s.t.\;\;\;\;{\left\| {{\boldsymbol{d}_n}} \right\|_2} = 1,\forall n;\boldsymbol{X}_i^j{\text{ = }}0,\forall i \ne j \hfill \\ \end{gathered} $ | (16) |

下面考虑对式(16)进行化简,首先介绍定理1,然后在定理2中给出式(16)的化简过程。

定理1

证明 首先定义mii、mi和m分别为Xii、Xi和X的平均向量。考虑到对于i≠j时有Xij=0, 因此可以重写为:mi=[0;…; mii; …; 0]和m=[n1m11; …; nimii; …; ncmcc]/n。

此时

| $ \begin{gathered} tr\left( {{S_B}\left( \boldsymbol{X} \right)} \right){\text{ = }} \hfill \\ \;\;\;\;\sum\limits_{i = 1}^c {{n_i}/{n^2}\left\| {\left[ { - {n_1}\boldsymbol{m}_1^1; \cdots ;\left( {n - {n_i}} \right)\boldsymbol{m}_i^i; \cdots ; - {n_c}\boldsymbol{m}_c^c} \right]} \right\|_2^2 = } \hfill \\ \;\;\;\;\sum\limits_{i = 1}^c {{\kappa _i}{n_i}\left\| {\boldsymbol{m}_i^i} \right\|_2^2} \hfill \\ \end{gathered} $ | (17) |

定理2 式(16)可以化简为如下形式:

| $ \begin{gathered} \mathop {\min }\limits_{\left( {\boldsymbol{D},\boldsymbol{X}} \right)} \sum\limits_{i = 1}^c {\left( {\left\| {{\boldsymbol{A}_i} - {\boldsymbol{D}_i}\boldsymbol{X}_i^i} \right\|_F^2 + {\lambda _1}^\prime {{\left\| {\boldsymbol{X}_i^i} \right\|}_1}} \right. + } \hfill \\ \;\;\;\;\;\left. {{\lambda _2}^\prime \left\| {\boldsymbol{X}_i^i - \boldsymbol{M}_i^i} \right\| + {\lambda _3}^\prime \left\| {\boldsymbol{X}_i^i} \right\|_F^2} \right) \hfill \\ s.t.\;\;\;\;{\left\| {{\boldsymbol{d}_n}} \right\|_2} = 1,\forall n \hfill \\ \end{gathered} $ | (18) |

其中各项系数的定义为:λ′1=λ1/2;λ′2=λ2(1+κi)/2;κi=1-ni/n; λ′3=λ2(η-κi)/2;Mii是由mii为列向量组成的矩阵;其他相关定义可参考定理1中的证明。

证明 类似于定理1,类内散度矩阵可以化简为:

| $ {S_W}\left( \boldsymbol{X} \right) = \sum\limits_{i = 1}^c {\sum\limits_{{\boldsymbol{x}_k} \in {\boldsymbol{X}_i}} {\left( {\boldsymbol{x}_k^i - \boldsymbol{m}_i^i} \right){{\left( {\boldsymbol{x}_k^i - \boldsymbol{m}_i^i} \right)}^T}} } $ |

相应的类内散度矩阵的迹为:

| $ tr\left( {{S_W}\left( \boldsymbol{X} \right)} \right) = \sum\limits_{i = 1}^c {\sum\limits_{{\boldsymbol{x}_k} \in {\boldsymbol{X}_i}} {\left\| {\boldsymbol{x}_k^i - \boldsymbol{m}_i^i} \right\|_2^2} } $ |

结合定理1,可以将f(X)进行化简:

| $ \begin{gathered} f\left( \boldsymbol{X} \right) = \sum\limits_{i = 1}^c {\left( {\sum\limits_{{\boldsymbol{x}_k} \in {\boldsymbol{X}_i}} {\left\| {\boldsymbol{x}_k^i - \boldsymbol{m}_i^i} \right\|_2^2 + } } \right.} \\ {\kappa _i}\left( {\left\| {\boldsymbol{X}_i^i} \right\|_F^2 - {n_i}\left\| {\boldsymbol{m}_i^i} \right\|_F^2} \right) + \left( {\eta - {\kappa _i}} \right)\left\| {\boldsymbol{X}_i^i} \right\|_F^2 \\ \end{gathered} $ | (19) |

定义矩阵Eij=[1]nj×nj, Mii=[mii]1×ni=XiiEii/ni, 此时可以得到:

| $ \begin{gathered} \left\| {\boldsymbol{X}_i^i} \right\|_F^2 - {n_i}\left\| {\boldsymbol{m}_i^i} \right\|_F^2 = \left\| {\boldsymbol{X}_i^i} \right\|_F^2 - \left\| {{{\left[ {\boldsymbol{m}_i^i} \right]}_{1 \times {n_i}}}} \right\|_F^2 = \hfill \\ \;\;\;\;{\text{tr}}\left( {\boldsymbol{X}_i^i\left( {I - \boldsymbol{E}_i^i/{n_i}{{\left( {\boldsymbol{E}_i^i/n} \right)}^T}} \right){{\left( {\boldsymbol{X}_i^i} \right)}^T}} \right) = \hfill \\ \;\;\;\;\left\| {\boldsymbol{X}_i^i - \boldsymbol{M}_i^i} \right\|_F^2 \hfill \\ \end{gathered} $ | (20) |

因此Fisher鉴别项可以化简为:

| $ f\left( \boldsymbol{X} \right) = \sum\limits_{i = 1}^c {\left( {\left( {1 + {\kappa _i}} \right)\left\| {\boldsymbol{X}_i^i - \boldsymbol{M}_i^i} \right\|_F^2 + \left( {\eta - {\kappa _i}} \right)\left\| {\boldsymbol{X}_i^i} \right\|_F^2} \right)} $ |

当i≠j时有Xij=0【,因此‖Ai-DXi‖F2=‖Ai-DiXii‖F2。

综合式(16)以及以上各式,最终定理2得以证明。

3.5 鉴别字典学习模型的优化式(16)中需要求解最终的结构化字典,以使之符合本文提出的算法模型,其优化过程可以通过交替迭代来进行:首先通过固定字典集D来更新系数矩阵X, 然后通固定系数矩阵X来更新字典集D, 通过这种模式来求解所需的结构化字典。

3.5.1 固定字典求解编码系数首先考虑固定字典D, 那么式(16)可以转化为求解一般性的稀疏编码问题。考虑到本文的软件缺陷检测数据集仅包含两类,相应的系数矩阵X=X1, X2], 可以考虑对二者依次迭代求解:通过固定X1来求解X2, 然后通过固定X2来求解X1。式(16)可以改写为:

| $ \mathop {\min }\limits_{{\boldsymbol{X}_i}} \left\{ {r\left( {{\boldsymbol{A}_i},\boldsymbol{D},\boldsymbol{X}} \right) + {\lambda _1}\left\| {{\boldsymbol{X}_i}} \right\| + {\lambda _2}{f_i}\left( {{\boldsymbol{X}_i}} \right)} \right\} $ | (21) |

| $ {f_i}\left( {{\boldsymbol{X}_i}} \right) = \left\| {{\boldsymbol{X}_i} - {\boldsymbol{M}_i}} \right\|_F^2 - \sum\limits_{k = 1}^c {\left\| {{\boldsymbol{M}_k} - \boldsymbol{M}} \right\|_F^2 + \eta \left\| {{\boldsymbol{X}_i}} \right\|_F^2} $ | (22) |

在满足η > κi的情况下式(22)中的目标函数严格凸优化,在本文中取η的值为1,将式(21)改写为如下形式:

| $ \mathop {\min }\limits_{{\boldsymbol{X}_i}} \left\{ {Q\left( {{\boldsymbol{X}_i}} \right) + 2\tau {{\left\| {{\boldsymbol{X}_i}} \right\|}_1}} \right\} $ | (23) |

其中:

| $ Q\left( {{\boldsymbol{X}_i}} \right) = r\left( {{\boldsymbol{A}_i},\boldsymbol{D},\boldsymbol{X}} \right) + {\lambda _2}{f_i}\left( {{\boldsymbol{X}_i}} \right) $ | (23) |

或者:

| $ \begin{gathered} Q\left( {{\boldsymbol{X}_i}} \right) = \left\| {{\boldsymbol{A}_i} - {\boldsymbol{D}_i}\boldsymbol{X}_i^i} \right\|_F^2 + {\lambda _2}^\prime \left\| {\boldsymbol{X}_i^i - \boldsymbol{M}_i^i} \right\|_2^2 + \\ {\lambda _3}^\prime \left\| {\boldsymbol{X}_i^i} \right\|_F^2 \\ \end{gathered} $ | (24) |

| $ \tau = {\lambda _1}/2 $ | (25) |

式(23)可以通过IMP(Iterative Projection Method)以迭代的形式进行求解,为了方便优化,η=κi=1-ni/n, 即λ3′=0。定义迭代步长σ, 步长的选择对于最终的求解比较关键,具体的推导及取值可以参考文献[22]。定义迭代序列数为h, 软阈值因子如式(26)所示(软阈值因子的详细定义及求解过程可以参见文献[23])。

| $ \left[ {{S_{\tau /\sigma }}} \right] = \left\{ \begin{gathered} 0,\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\left\| {{\boldsymbol{x}_j}} \right\| > \tau /\sigma \hfill \\ {\boldsymbol{x}_j} - \operatorname{sign} \left( {{\boldsymbol{x}_j}} \right)\tau /\sigma ,\;\;\;\;\left\| {{\boldsymbol{x}_j}} \right\| > \tau /\sigma \hfill \\ \end{gathered} \right. $ | (26) |

其求解过程如下:

1) 输入:σ和τ, σ > 0, τ > 0。

2) 初始化:Xi(1)=0, h=1。

3) 开始迭代:

①迭代终止条件:前后差值开始收敛或达到最终迭代次数。

②迭代次数h=h+1。

③

4) 返回Xi=Xi(h)。

3.5.2 固定编码系数求解字典在3.4.1节中系数矩阵X被求解后固定下来,可以通过轮流更新迭代求解字典D1和D2。此时可以将式(18)改写为如下形式:

| $ \begin{gathered} {J_{\left( {{\boldsymbol{D}_i}} \right)}} = \mathop {\arg \;\;\min }\limits_{\left( {{\boldsymbol{D}_i}} \right)} \left\{ {\left\| {\boldsymbol{A} - {\boldsymbol{D}_i}{\boldsymbol{X}^i} - \sum\limits_{j = 1,j \ne i}^2 {{\boldsymbol{D}_j}{\boldsymbol{X}^j}} } \right\|_F^2 + } \right. \hfill \\ \;\;\;\;\;\;\;\left. {\left\| {{\boldsymbol{A}_i} - {\boldsymbol{D}_i}\boldsymbol{X}_i^i} \right\|_F^2 + \sum\limits_{j = 1}^2 {\left\| {{\boldsymbol{D}_j}\boldsymbol{X}_i^j} \right\|_F^2} } \right\} \hfill \\ s.t.\;\;\;\;{\left\| {{\boldsymbol{d}_n}} \right\|_2} = 1 \end{gathered} $ | (27) |

这里的Xi为样本集A关于Di的编码系数。式(27)是一个凸优化问题,其求解过程具体可以参考文献[24],由于篇幅所限,本文不再详细介绍。

最终DDL模型的求解步骤如下:。

1) 初始化字典集合D。

2) 固定字典D, 通过3.5.1中的求解过程分别迭代求解系数集合X1及X2。

3) 固定步骤2)中的X1及X2, 根据文献[24]求解D1及D2, 得到最终的结构化字典。

4) 使用SRC分类器对样本进行重构,利用重构之后的误差进行分类工作。

4 实验分析本文通过引入几种重要的分类器性能指标进行实验对比,并选择来源于美国国家宇航局(NASA)的软件度量程序(MDP)作为实验数据。在实验过程中,将选择支持向量机(SVM)、决策树(C4.5)、主成分分析(PCA)、Logistics回归(LR)方法、具有代表性的字典学习方法DLSDP(Dictionary Learning based Software Defect Prediction)[25]这几种算法作为对比算法。本文选择Matlab R2011a作为实验工具,实验所用PC配置为Intel酷睿i7 6700 CPU,32 GB内存。

4.1 数据集介绍本文选择来自NASA的MDP项目进行实验。MDP项目是一个向全球公开的数据度量项目,任何用户均能够从NASA的官网下载其中的数据。MDP项目包含多个软件缺陷数据集子集,本文选择其中的JM1、CM1、KC1、MW1及PC1作为实验对比数据集。以其中的JM1为例,JM1数据集始于某个地面预测系统的软件项目,使用C++进行开发,共有10879个模块、21个软件度量属性以及1维的是否为缺陷的标签,其中21个属性包含总代码行数,McCabe的循环复杂度、基本复杂度、设计复杂度,Halstead程序的级别、容量、工作量、时间、长度等属性。

4.2 分类器性能评价指标表 1中定义了几种分类的行为,其中正确预测为正类(True Positive, TP)表示在分类过程中实际为有缺陷的数据正确分类为有缺陷;错误预测为负类(False Negative, FN)表示实际为有缺陷的数据预测为无缺陷;错误预测为正类(False Positive, FP)表示实际为无缺陷的数据被预测为有缺陷,正确预测为负类(True Negative, TN)定义为无缺陷数据预测正确预测为无缺陷数据。

| 表 1 两类问题分类情况 |

由于最终的检测结果是判断数据是否有缺陷,因此是一个两类问题,仅仅通过分类准确率来评价一个算法的有效性是不够全面的,因此在本文的实验中引入召回率、错误接受率、查准率、准确率以及F-measure值较为全面地衡量分类算法的有效性。以下简单介绍这几种值的定义:

1) 召回率:re=TP/(TP+FN)。召回率是一种重要的分类器性能衡量指标,因为在实际应用中需要重点考虑的是有缺陷的数据,其反映了被正确判定的缺陷样本占总的缺陷样本的比重,即衡量有缺陷样本检测的全面程度。

2) 错误接受率:pf=FP/(FP+TN),其反映了分类结果中无缺陷数据被预测为有缺陷数据的比例。

3) 查准率:pre=TP/(TP+FP), 用来衡量检测到有缺陷样本的准确率。

4) 准确率:acc=(TP+TN)/(TP+FN+FP+TN),用来衡量着所有正确分类的样本占总样本的比例。

从以上定义看出,一个好的分类器预测模型,希望能满足较高的召回率、缺陷检测准确率以及准确率,较低的错误接受率,尤其是查准率和召回率是两类问题中比较重要的指标。然而,实际情况中,查准率和召回率之间往往难以同时都达到较高的值,需要在二者之间寻求权衡,因此需要折中考虑二者。本文引入F-measure值来考虑衡量准确率和召回率的调和平均数,其被定义为:

| $ F - measure = 2*\beta *re*pre/\left( {re + {\beta ^2}pre} \right) $ | (18) |

在实验中取β的值为1,即通常所说的F1-measure值。从以上定义显然可以看出,所有的评估指标的取值范围均在0~1。

4.3 实验结果及分析在实验过程中,对于SVM算法采用径向基核,同时选择惩罚因子C=1来分别进行实验。对于本文提出的DDL模型,取迭代步长σ的值为0.1,λ1的值取0.01,λ2的值为0.005。为了公平地进行实验,在本文的实验中每种算法最终选择随机进行20组交叉实验验证,以合理地进行算法对比,最终得到的re、pf、pre实验结果如表 2所示,acc以及F1-measure如表 3所示。

| 表 2 不同算法在不同数据集上的re、pf、pre对比 |

| 表 3 不同算法在不同数据集上的acc值以及F1-measure值对比 |

从表 2、3可以看出,与其他算法相比,本文提出的DDL算法最终能够将F1-measure提高0.03~0.26,而准确率能够提高0.03~0.20,而召回率、查准率也有一定程度的改善。其主要原因主要是本文提出的字典学习模型具有较强的鉴别分类能力,对比其他几种机器学习方法,字典学习模型本身又有较好的抗干扰能力。而PCA由于其是一种无监督的降维方法,没有有效利用丰富的标签信息,相对于其他集中算法效果最差;LR是一种模型较为简单的回归算法,模型本身不具有鉴别能力,同时从其自身的应该来看,算法泛化能力较差,因此实验效果相对较差;C4.5其容易出现过度拟合的问题,而且其对连续性的字段难以作出准确的预测;对于SVM,其虽然拥有较强的泛化能力,但是需要选择合适的核函数,而SVM本身也具有多种形式的模型,因此如何选择合适的参数也是一个问题,同时SVM也没有很强的鉴别分类能力。

值得一提的是本文选择了DLSDP算法进行对比,可以发现,DLSDP也是一种字典学习方法,由于其在算法中主要使用代价敏感方法来解决类不平衡问题,因此所得到的F-measure值和本文的算法相比很接近;另一方面,由于其没有考虑不同类字典的鉴别性质,因此在检测精度上略差于本文算法。

5 结语为解决传统的字典学习方法不能有效构造具有鉴别能力字典的问题,提出了一种新的基于鉴别字典学习的分类算法,并用于软件缺陷检测,即基于鉴别字典学习的软件缺陷检测算法。该算法首先通过添加鉴别表示项以突出不同类字典对不同类样本的表示能力,其次添加Fisher准则系数鉴别项,使得不同类的表示系数具有较好的鉴别能力;然后通过迭代算法求解相应模型的目标函数,最后选择在MDP数据集上进行实验,获得的实验结果表明能够在改善检测性能的基础上提高检测精度。现实当中二分类经常面临正负样本不平衡的问题,在本文提出的算法中,可以通过引入代价敏感约束进一步提高算法的分类性能,考虑不同类样本数目对分类造成的代价影响。

| [1] |

BAGGEN R, CORREIA J P, SCHILL K, et al. Standardized code quality benchmarking for improving software maintainability[J].

Software Quality Journal, 2012, 20 (2) : 287-307.

doi: 10.1007/s11219-011-9144-9 ( 0) 0)

|

| [2] |

SHEPPERD M, SONG Q, SUN Z, et al. Data quality: some comments on the nasa software defect datasets[J].

IEEE Transactions on Software Engineering, 2013, 39 (9) : 1208-1215.

doi: 10.1109/TSE.2013.11 ( 0) 0)

|

| [3] |

MA Y, LUO G, ZENG X, et al. Transfer learning for cross-company software defect prediction[J].

Information and Software Technology, 2012, 54 (3) : 248-256.

doi: 10.1016/j.infsof.2011.09.007 ( 0) 0)

|

| [4] |

WANG S, YAO X. Using class imbalance learning for software defect prediction[J].

IEEE Transactions on Reliability, 2013, 62 (2) : 434-443.

doi: 10.1109/TR.2013.2259203 ( 0) 0)

|

| [5] |

SONG Q, JIA Z, SHEPPERD M, et al. A general software defect-proneness prediction framework[J].

IEEE Transactions on Software Engineering, 2011, 37 (3) : 356-370.

doi: 10.1109/TSE.2010.90 ( 0) 0)

|

| [6] |

PENG Y, KOU G, WANG G, et al. Ensemble of software defect predictors: an AHP-based evaluation method[J].

International Journal of Information Technology and Decision Making, 2011, 10 (1) : 187-206.

doi: 10.1142/S0219622011004282 ( 0) 0)

|

| [7] |

ZHENG J. Cost-sensitive boosting neural networks for software defect prediction[J].

Expert Systems with Applications, 2010, 37 (6) : 4537-4543.

doi: 10.1016/j.eswa.2009.12.056 ( 0) 0)

|

| [8] |

GRAY D, BOWES D, DAVEY N, et al. Reflections on the NASA MDP data sets[J].

IET Software, 2012, 6 (6) : 549-558.

doi: 10.1049/iet-sen.2011.0132 ( 0) 0)

|

| [9] |

姜慧研, 宗茂, 刘相莹. 基于ACO-SVM的软件缺陷预测模型的研究[J].

计算机学报, 2011, 34 (6) : 1148-1154.

( JIANG H Y, ZONG M, LIU X Y. Research of software defect prediction model based on ACO-SVM[J].

Chinese Journal of Computers, 2011, 34 (6) : 1148-1154.

doi: 10.3724/SP.J.1016.2011.01148 ) ( 0) 0)

|

| [10] |

ELISH K O, ELISH M O. Predicting defect-prone software modules using support vector machines[J].

Journal of Systems and Software, 2008, 81 (5) : 649-660.

doi: 10.1016/j.jss.2007.07.040 ( 0) 0)

|

| [11] |

KHOSHGOFTAAR T M, SELIYA N. Software quality classification modeling using the SPRINT decision tree algorithm [C]// ICTAI '02: Proceedings of the 14th IEEE International Conference on Tools with Artificial Intelligence. Washington, DC: IEEE Computer Society, 2002: 365-374.

( 0) 0)

|

| [12] |

ARAR Ö F, AYAN K. Software defect prediction using cost-sensitive neural network[J].

Applied Soft Computing, 2015, 33 (C) : 263-277.

( 0) 0)

|

| [13] |

ABDI H, WILLIAMS L J. Principal component analysis[J].

Wiley Interdisciplinary Reviews: Computational Statistics, 2010, 2 (4) : 433-459.

doi: 10.1002/wics.v2:4 ( 0) 0)

|

| [14] |

VIDAL R, MA Y, SASTRY S. Generalized Principal Component Analysis (GPCA)[J].

IEEE Transactions on Pattern Analysis and Machine Intelligence, 2005, 27 (12) : 1945-1959.

doi: 10.1109/TPAMI.2005.244 ( 0) 0)

|

| [15] |

SELIYA N, KHOSHGOFTAAR T M. Software quality analysis of unlabeled program modules with semisupervised clustering[J].

IEEE Transactions on Systems, Man and Cybernetics, Part A: Systems and Humans, 2007, 37 (2) : 201-211.

doi: 10.1109/TSMCA.2006.889473 ( 0) 0)

|

| [16] |

BISHNU P S, BHATTACHERJEE V. Software fault prediction using quad tree-based K-means clustering algorithm[J].

IEEE Transactions on Knowledge and Data Engineering, 2012, 24 (6) : 1146-1150.

doi: 10.1109/TKDE.2011.163 ( 0) 0)

|

| [17] |

MA Y, ZHU S, QIN K, et al. Combining the requirement information for software defect estimation in design time[J].

Information Processing Letters, 2014, 114 (9) : 469-474.

doi: 10.1016/j.ipl.2014.03.012 ( 0) 0)

|

| [18] |

GAO K, KHOSHGOFTAAR T M, WANG H, et al. Choosing software metrics for defect prediction: an investigation on feature selection techniques[J].

Software—Practice and Experience, 2011, 41 (5) : 579-606.

doi: 10.1002/spe.1043 ( 0) 0)

|

| [19] |

SMITH L N, ELAD M. Improving dictionary learning: multiple dictionary updates and coefficient reuse[J].

IEEE Signal Processing Letters, 2013, 20 (1) : 79-82.

doi: 10.1109/LSP.2012.2229976 ( 0) 0)

|

| [20] |

YAN R, SHAO L, LIU Y. Nonlocal hierarchical dictionary learning using wavelets for image denoising[J].

IEEE Transactions on Image Processing, 2013, 22 (12) : 4689-4698.

doi: 10.1109/TIP.2013.2277813 ( 0) 0)

|

| [21] |

MAIRAL J, ELAD M, SAPIRO G. Sparse representation for color image restoration[J].

IEEE Transactions on Image Processing, 2008, 17 (1) : 53-69.

doi: 10.1109/TIP.2007.911828 ( 0) 0)

|

| [22] |

MARCHESINI S. Invited article: a unified evaluation of iterative projection algorithms for phase retrieval[J].

Review of Scientific Instruments, 2007, 78 (1) : 011301.

doi: 10.1063/1.2403783 ( 0) 0)

|

| [23] |

LUISIER F, BLU T, UNSER M. A new SURE approach to image denoising: interscale orthonormal wavelet thresholding[J].

IEEE Transactions on Image Processing, 2007, 16 (3) : 593-606.

doi: 10.1109/TIP.2007.891064 ( 0) 0)

|

| [24] |

YANG M, ZHANG L, YANG J, et al. Metaface learning for sparse representation based face recognition [EB/OL]. [2015-11-26]. http://www4.comp.polyu.edu.hk/~cslzhang/paper/conf/ICIP2010/ICIP10_3551_YM.pdf.

( 0) 0)

|

| [25] |

JING X-Y, YING S, ZHANG Z-W, et al. Dictionary learning based software defect prediction [C]// ICSE 2014: Proceedings of the 36th International Conference on Software Engineer. New York: ACM, 2014: 414-423.

( 0) 0)

|